Canvas news

| The FlightGear forum has a subforum related to: Canvas |

This page is primarily intended to keep track of Canvas-related core/fgdata changes and discussions. It is mainly of interest to people who cannot follow the forum/devel list regularly but still want to stay up to date on future Canvas developments (i.e. not including fgaddon).

Everybody is invited to help maintain this page and provide up-to-date information. Quoting/referencing devel list and forum discussions is actively encouraged too, to help provide pointers back to the original discussion threads: Instant-Cquotes.

The following is a list of Canvas-related proposals and discussions that have come up over the years; some of these are efforts currently in progress:

- Hackathon Proposal:Canvas Widgets - getting rid of PUI via the Canvas GUI system

- Unifying the 2D rendering backend via canvas - getting rid of 2D Panels and the hard-coded HUD via the Canvas

- Shiva Alternatives - porting Canvas.Path

- Canvas SVG - making Canvas SVG handling faster

- Canvas popout windows - allowing canvas popout windows (Jules came up with related code as part of the CompositeViewer code)

- Canvas instancing - making Canvas displays more efficient

- Canvas Threading - a New Canvas Execution Model (RFC)

- Canvas sandbox - various Canvas/core related patches that were contributed by various folks over the years

2023

Pango Text

| This article is a stub. You can help the wiki by expanding it. |

In the long run we need to implement simple, GTK-like markup support for canvas.Text, mainly for easy selection handling (I have that working for single-line text, but it's got visual artifacts and it would be incredibly complicated to make it work for multi-line text), but there are other use cases too (like syntax highlighting in the Nasal console, etc.).[2]

Successfully subclassed Pango::Renderer, and I'm also successfully showing an osg::Texture2D as an element on the canvas, but when I try to copy an osgText::Glyph onto that texture, I get a noise texture instead of a glyph … the other issue I'm having is some pointer problem (a smart pointer of one CanvasPangoText instance only getting deleted upon the creation of the next instance, which leads to it wanting to delete something that was already deleted !?). On both problems I'm somewhat stuck, so it would be great if @James Turner <ja...@fl...> or @Hooray could take a look - the latest code is available at git.code.sf.net/u/thefgfseagle/simgear.git / branch canvas_pangotext, in simgear/canvas/elements/{CanvasPangoText,PangoRendererCanvas}.cxx[3]

And the new element can be tested with the following Nasal code, after checking out the canvas_pangotext branch from git.code.sf.net/u/thefgfseagle/fgdata [4]:

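```nasal
# Create a 200x200 dialog window with an attached canvas
var w = canvas.Window.new([200, 200], "dialog");
var c = w.createCanvas();
var g = c.createGroup();

# "pangotext" and setMarkup() are provided by the canvas_pangotext branch
var t = g.createChild("pangotext");
t.setMarkup("<b>bold</b>");

w.show();
```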

PUI Emulation


01/2023: James is slowly working on creating the pop-up menu and combo-box widgets [...] We need to figure out the split of DefaultStyle.nas, but James is very keen to ensure we add a second style alongside the default before we change much here.

Regarding styling, James is worried about us ending up putting code into the wrong side of the widget / Style split, if we don’t have a second alternative style implementation to ‘keep us honest’[5]

We really have two kinds of ’styles’:

- tweaking margins, font sizes, colours etc should indeed be done in XML as you wrote: this is also analogous to styles in the PUI sense

- making radically different ways of presenting the same logical widgets: e.g. the text label of a checkbox in a different position relative to the button (or Surface vs Material vs iOS style, if you know web/mobile UIs)

For the second kind we would use a different Nasal style implementation and that’s the abstraction I think it is very important to maintain. It is of course going to be a lot of work to make additional styles this way, but it’s a very valuable feature to keep the possibility, since it enables alternate UIs for user accessibility or different presentation modes such as VR.

What I don’t want to do is add lots of widgets assuming we only care about DefaultStyle.nas, and then have an impossible mountain to climb when someone needs an alternate Nasal style. We don’t have to make a complete alternate style now, but I don’t want to take shortcuts that make it impossible in the future. So I want to really think carefully about what is widget code and what is style code. [6]
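The widget/style split described above can be illustrated with a small, language-neutral sketch. It is written in Python rather than Nasal, and every name in it (`Checkbox`, `DefaultStyle`, `AlternateStyle`, `draw`) is hypothetical; it does not reflect the actual code in DefaultStyle.nas or the Canvas widget classes. The point is only that the widget holds state and API, while each style decides where the label and indicator go.

```python
from dataclasses import dataclass


@dataclass
class Checkbox:
    """Widget side: logical state and API only, no layout decisions."""
    label: str
    checked: bool = False

    def toggle(self) -> None:
        self.checked = not self.checked


class DefaultStyle:
    """Hypothetical style: indicator on the left, label on the right."""

    def render_checkbox(self, widget: Checkbox) -> str:
        box = "[x]" if widget.checked else "[ ]"
        return f"{box} {widget.label}"


class AlternateStyle:
    """Hypothetical second style: label first, switch-like state last."""

    def render_checkbox(self, widget: Checkbox) -> str:
        state = "ON" if widget.checked else "OFF"
        return f"{widget.label}: {state}"


def draw(widget: Checkbox, style) -> str:
    # The widget never formats itself; presentation is delegated to the style.
    return style.render_checkbox(widget)
```

Having a second style that renders the same widget differently is what would expose presentation logic that has leaked into the widget class.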

One of the major things slowing down James' work on the popup-menu and combo box is the time it takes to restart the simulator for each change; he is looking for some solution to re-load the widget Nasal code independently. [7]

James has done about 75% (hah!) of the C++ work to enable live reloading of modules this way, but unfortunately there are some code paths that would become crashy if you use the feature for the Canvas: because it would reference the ‘old’ (pre-reload) Nasal code, and not the new one, and therefore you’d get a weird mix of old and new, and then just crash the sim. To fix it properly I need to track down those places that store a reference to Canvas and give them a re-init method, so we don’t keep the stale references.

The general non-fun-ness of that kind of debugging is why I’ve been making such slow progress on my PUI replacement widgets recently [8]

2022

ListView Widget

For the Logbook Add-on, PlayeRom implemented a ListView canvas widget that can be reused by others, so he sent a merge request with this widget.[9]

See also Merge request:Canvas ListView implementation

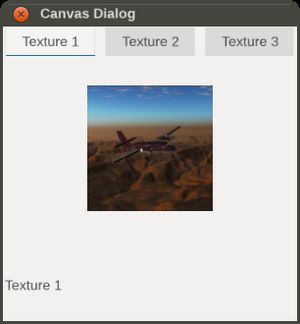

Tab Widget

FGFSEagle needed a tab widget for his C310, so he did a quick implementation inspired by FGAddon/trunk/Aircraft/f16/Nasal/canvas/gui/widgets/Tabs.nas.

The plan is to add scrolling / reordering / closing support for the tabs later. [10]

Learn more at Canvas snippets#Using_TabWidgets

For the merge request, see Canvas tab widget implementation

For the underlying idea, refer to: PUI#Replacement status

Releasing Canvas textures

| This article is a stub. You can help the wiki by expanding it. |

We'd need some kind of way to delete the texture without deleting the window, redrawing the texture when the window is reopened? Starts to sound complicated … but on the other hand, all windows on the Extra 500 use a custom Window class which does something similar … [11]

I've updated the code to directly hide the window when the close button is pressed, and also fixed a bug where windows were shown automatically (which is not desired when the window is constructed at startup and should only be shown later). New patch attached. As for memory usage, I did a test with 100 canvas windows of 1000x1000 px each, all with destroy_on_close = 0. Constructing them didn't give a significant increase in memory usage; only upon showing them all at once did the RAM usage go up by 2 GB. That's quite a lot, you might say, but 1) most dialogs are not as big as that and 2) which user opens 100 dialogs in one session? In most cases it won't be more than 10 dialogs, i.e. about 200 MB of RAM (if all dialogs were using this new behaviour). The default behaviour is to destroy the window on closing anyway (destroy_on_close = 1 by default; you have to explicitly set it to 0 to get the new behaviour, which also maintains backwards compatibility), so I think this won't be an issue.[12]

What we’d ideally want is a C++ change so that hidden canvases release their texture memory : it’s created the first time the canvas is rendered, which is why the memory is fine until you actually show them.

200MB memory is not great, but the bigger problem is the GPU-side memory : 200MB of VRAM is much more ‘valuable’ for textures than for keeping the backing store of hidden dialogs. So I would definitely say use this feature sparingly, until someone adds some C++ logic to destroy the backing texture for hidden canvas windows.[13]
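The 200 MB figure follows from simple linear scaling of the reported test (about 2 GB for 100 shown windows of 1000x1000 px). A minimal sketch of that arithmetic, assuming memory scales linearly with the number of shown windows and taking 2 GB as 2000 MB; the function name is made up for illustration:

```python
def estimate_hidden_window_ram_mb(n_windows, observed_total_mb=2000.0,
                                  observed_windows=100):
    """Scale the reported measurement linearly to n_windows.

    Assumption: every window costs the same as in the reported test
    (1000x1000 px canvas, texture allocated once the window was shown).
    """
    per_window_mb = observed_total_mb / observed_windows  # about 20 MB each
    return n_windows * per_window_mb
```

Smaller dialogs would presumably cost less per window, so this is an upper-end estimate for typical use.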

For this window behaviour one, I’ve applied it since it’s an opt-in change, but I suspect we may end up reviewing this API a little, so I’d suggest not building lots of dialogs across different aircraft, which depend on this behaviour, until we get a bit more experience with it.

So use it, gain some understanding, but maybe we discover in 6 months that it’s a bad idea and have to revert it. (That’s the worst case, but it is kind of a radical change in the lifetime / memory usage of the Canvas, so I just want to mention the worst possibility) I’m 90% sure we can keep this API, so long as we improve the C++ behaviour to release the texture / FBO memory of hidden canvases, which is a reasonable thing to do anyway, it just needs someone to have the time to implement that, and right now, I certainly don’t.[14]

Replacing PUI

Update 09/2022: The PUI replacement was going to be Qt but it started to get very complicated with changes in Qt 5.15 + Qt 6, so James is going with a more light-weight, Canvas-based, approach now.

Qt added support for Vulkan / Metal / D3D starting in 5.15, but FlightGear / OpenSceneGraph can’t support those, so integrating the two renderers went from being ‘complicated but ok’ to ‘very very complicated’.

So now James is going with something much more lightweight using some C++ compatibility code, some Nasal for styling and the existing widget rendering from Thomas Geymayer (TheTom) with some extensions and additions: some pieces are in FlightGear & FGData already.

James has basic dialogs working okay but not the more complex ones, and everything looks kind of ugly; he needs to improve the visual look before he shares screenshots to avoid everyone freaking out :) The disadvantage of this approach is that James is far from expert at creating visual appearances this way, so it’s kind of unrewarding and slow for him. If someone likes messing with CSS-type styling, border-images and hover-states, ping him since we could probably move things along faster [15]

The PUI limitations should be gone ‘soon’: actually James is now focused on getting dialogs working; on macOS we use a native menubar (not PUI) inside FG which can handle sub-menus just fine.

If someone out there wants to work on the UI for menus / menubar part, just ping him directly, he can suggest some steps to start working that way. (Basically creating the view code in Nasal for menu items, menus and the menubar, and handling the different visual states of the above, such as an item being disabled, showing the keyboard shortcut, eliding too-long item names, hover feedback, timers to open menus / sub-menus on mouse-over: it’s all pretty standard stuff)

This does *also* need C++ parts, because the internal menubar structure /also/ needs some additions to support sub-menus, and the add-ons themselves would likely need some tweaks in the XML to integrate with that. So in total there are quite a few changes in this ’simple’ request, but this is often the way with software development :)[16]


In February 2022, James reported having something in progress locally, and that should even be something we can try in March/April 2022. Hopefully that’s quick enough, knowing it has been a long time coming.[17]

It’s split between Simgear (see classes with widget / layout in the name) and in FGData. (Eg widgets/Button.nas) To be able to use the existing dialog XML files un-modified (which is a design goal), James is extending the widget types with many additional ones (eg PUI has slider, dial, combo-box, checkbox, all of which need to be created - this is about 30% done and is the bit he's very slow at). Thomas’s widgets have a very good separation of API + state from appearance, so all styling is in its own file, and James is being very strict about maintaining this separation, so we also retain the re-styling feature of the PUI UI, which many people also rely on. This does make the process of adding new widgets more complex, however.

The other thing is to preserve all the layouting: James has added a grid layout to Simgear, since that is supported by the existing PUI code (even though the layouts are not actually part of PUI itself). The problem is getting the sizing / hinting of all the widgets to match the PUI values, so that dialogs look approximately the same under the new UI as they did with PUI; again this is a design goal so that all existing dialogs in aircraft and add-ons, which we can’t update, continue to work and be usable. Debugging that is also proving quite tricky, since there are all kinds of hard-coded assumptions built into PUI widgets about pixels, font-sizes etc which are not true in the new system.[18]

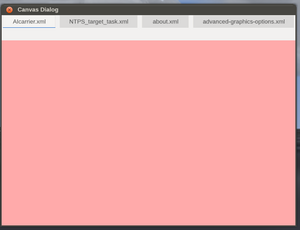

XMLDialog.nas


There's now an XML to Nasal bridge to keep PUI dialogs working (controlled by a CMake option). This is implemented on top of the Canvas system.

James reported having something in progress locally, and that should even be something we can try in 2022. Hopefully that’s quick enough, knowing it has been a long time coming.[19]

The new code builds equivalent C++ objects to what the PUI dialogs build, with properties exposed to Nasal.

Peer objects are created by Nasal callbacks, which can implement the various dialog functions needed to keep compatibility, especially the ‘update’ and ‘apply’ hooks.[20]

Use the following cmake option to enable this code: -DENABLE_PUICOMPAT=ON

The new Compatibility files can now be found in $FG_SRC/GUI:

- flightgear/src/GUI/FGPUICompatDialog.cxx

- flightgear/src/GUI/FGPUICompatDialog.hxx

- flightgear/src/GUI/PUICompatObject.cxx

The corresponding Nasal/Canvas module to dynamically "translate" legacy PUI/XML dialogs into Canvas dialogs (at runtime), is to be found in fgdata/Nasal/gui/XMLDialog.nas [21]

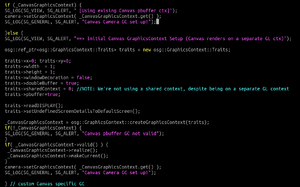

Core Profile support / replacing ShivaVG


We need to remove PUI and change the Canvas not to use ShivaVG (Canvas Path back end), to be ES2 compatible or Core-profile compatible.[24]

To support Canvas on OpenGL Core profile, the plan is to migrate from Shiva to ‘something else’ which implements the required drawing operations. Unfortunately two of the probable solutions (Skia from Chrome and Cairo from Gtk) are both enormous and a pain to deploy on macOS and Windows.

As part of the Core profile migration, we need to replace ShivaVG (which is the functional guts of Path.cxx) with a shader based implementation, ideally NanoVG, although Scott has indicated this might not be as easy as originally hoped. [25]

Early experiments (patch [2]) in 02/2022 with ShaderVG were promising and suggest that it ‘kind of sort of’ works; that is already less work than integrating NanoVG.[26]

For the time being, it's not a one-to-one drop-in replacement but it works quite nicely:

- The x,y positions are off compared to ShivaVG, making things slightly bigger (maybe too big for the screen).

- The "title bar" is opaque.[27]

2021

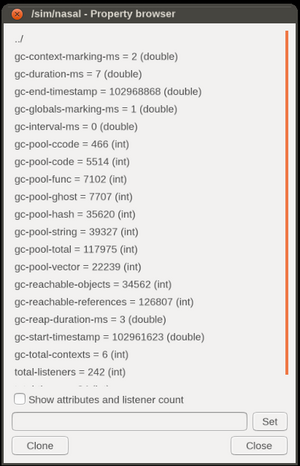

Moving Canvas Rendering to a separate OpenGL Context


Early experiments moving Canvas rendering out of the main loop by using aggressive OSG threading in conjunction with a separate GL context for Canvas cams, and subsequently, with also moving Nasal (and Property Tree I/O) out of the main loop by using a dedicated thread per Canvas [Texture], probably with its own FGNasalSys instance and related subsystem machinery (mainly, events/timers).

Dedicated prerender cams

2021-08-27: Fernando has begun working on moving Canvas cameras out of the scene graph into dedicated PRERENDER cams in the viewer. This addresses a long-standing issue where Canvas cameras were rendered multiple times, once per slave camera; in the case of the Classic pipeline they were being rendered twice.

2020

Canvas EFIS Framework

The canvas EFIS framework (created by jsb) is a by-product of the EFIS development done for the CRJ700 family, which uses Rockwell Collins Proline. It is published as a separate framework in the hope that it is useful for other aircraft as well; however, some features may be rather specific. The biggest motivation and primary design goal was to support "near optimal" performance, but that does not come automatically of course.


2019

placeholder for next year

2018

Compositor


Icecode has been working on and off on multi-pass rendering support for FlightGear since late 2017. It went through several iterations and design changes, but he thinks it's finally on the right track. It's heavily based on the Ogre3D Compositor and inspired by many data-driven rendering pipelines. Its features include:

- Completely independent of other parts of the simulator, i.e. it's part of SimGear and can be used in a standalone fashion if needed, ala Canvas.

- Although independent, its aim is to be fully compatible with the current rendering framework in FG. This includes the Effects system, CameraGroup, Rembrandt and ALS (and obviously the Canvas).

- Its functionality overlaps Rembrandt: what can be done with Rembrandt can be done with the Compositor, but not vice versa.

- Fully configurable via an XML interface without compromising performance (ala Effects, using PropertyList files).

- Optional at compile time to aid merge request efforts.

- Flexible, expandable and compatible with modern graphics.

- It doesn't increase the hardware requirements, it expands the hardware range FG can run on. People with integrated GPUs (Intel HD etc) can run a Compositor with a single pass that renders directly to the screen like before, while people with more powerful cards can run a Compositor that implements deferred rendering, for example.

Unlike Rembrandt, the Compositor makes use of scene graph cameras instead of viewer level cameras.

This allows CameraGroup to manage windows, near/far cameras and other slaves without interfering with what is being rendered (post-processing, shadows...).

The Compositor is in a usable state right now: it works but there are no effects or pipelines developed for it. There are also some bugs and features that don't work as expected because of some hardcoded assumptions in the FlightGear Viewer code. Still, I think it's time to announce it so people much more knowledgeable than me can point me in the right direction to get this upstream and warn me about possible issues and worries. :)

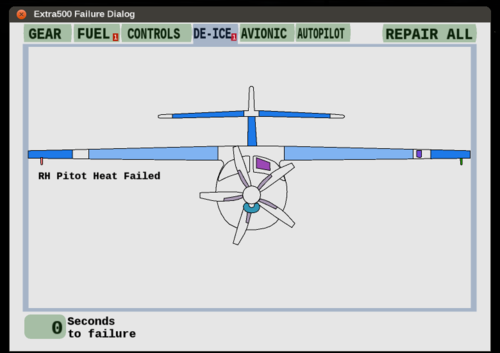

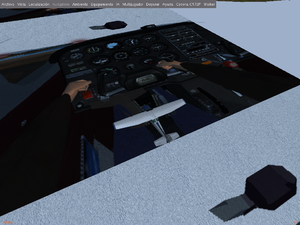

Aircraft Dialogs

Some of you may already know that the current tool to generate the dialogs (PUI) is going to disappear in the mid-future. After some (partially controversial) discussion, there now seems to be some support for the idea that canvas is a good tool to generate aircraft-specific dialogs in the future [28] (as it allows the dialog to be tailored closely to the plane and, canvas being canvas, the UI can smoothly mesh with the 3d models, so you can, for instance, project a canvas checklist onto a 3d model in sim rather than showing a popup window).

Thorsten would very much like to claim to be pioneering this approach, but in fact he believes the Extra-500 team is - look at the failure dialog of that plane where you can click the components you want to fail and you see what he means!

Anyway, Thorsten has started to roll out a few designs of his own and try to keep the tools fairly general so that they can be re-used by others- so here's the revised version of the Shuttle propellant dialog.

The general idea is to use semi-transparent 'content gauges' on a background raster image to show where the tank is located and how full it currently is. Double-clicking any tank will bring up a detail window which allows setting the content (here propellant and oxidizer separately, this is rocket fuel...) and also shows the current tank pressures and temperatures. The whole thing can readily be applied on top of a different raster image with a different number of tanks: you just instance and position the labels and 'gauges' you need. In fact placement is probably what's going to take longest (at least for me). The whole thing is currently in flux [29]. If anyone wants to follow the development or use the code, it's here: https://sourceforge.net/p/fgspaceshuttledev/code/ci/development/tree/Nasal/canvas/canvas_dialogs.nas

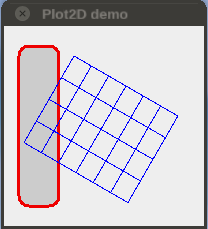

Plot2D

2017

Vertical Situation Display

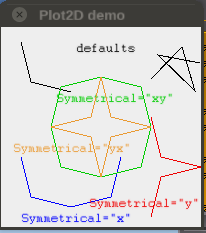

rleibner is developing a graph class that works using local (customized) coordinates and calls the plot2D helpers (see below). For testing (and as exercise) he came up with a VSD (Vertical Situation Display): [32]

Plot2D Helpers

Plot2D is nothing more than a collection of helpers that aims to facilitate the task of coding. It makes intensive use of the Canvas API, a mandatory reference for those who intend to refine the result beyond what is offered by plot2D.

It is assumed here that you already have a minimal knowledge about Canvas.

For now Plot2D resides in the SpokenGCA addon, but the idea is that in the future it could be included in the $FG_ROOT/Nasal/canvas directory.

Continue reading at How to manipulate Canvas elements ...

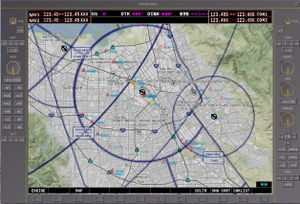

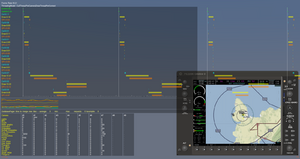

FG1000

Here is a little update on the G1000 emulation. Stuart has now got the key MapStructure layers in place, though they are not styled correctly for the G1000 yet and Stuart would like to replace some of them (airspace in particular) with vector data. He has also started on the MFD architecture. For prototyping he hacked together a PUI dialog box, which speeds up development massively as it reloads all the Nasal code each time it's opened.

There's a screenshot of it here:

There's also a wiki page of the current status here: http://wiki.flightgear.org/FG1000 Next steps are to get the MFD architecture in place - this will probably require some changes to Richard's generic MFD to support buttons that don't change the MFD pages better. Stuart has not yet pushed this to fgdata - he'll wait until he's happy with the overall architectures so that there is a solid base for anyone else interested in helping out.[34]

CanvasWidget and PUI

James pushed a change which changes how we integrate PUI into the renderer and other systems [35]; this makes our PUI usage more modular, so it can be enabled / disabled in a standard way. It also renders PUI via an FBO so we can use it safely (and scale it) on HiDPI screens, since PUI is too old to support a scaling factor as more modern UI toolkits would do. I’ve done a fair amount of testing, and everything seems to be working, but if you see any changes in how PUI reacts to mouse or key presses, or the appearance of things, please let James know via the issue or devel mailing list. It’s using the same code as it always did but starting from a slightly different place, both in terms of drawing and event handling. One temporary regression: right now CanvasWidget (the mechanism by which we include canvas content into PUI) is messed up because previously PUI had no alpha channel, so the canvas’s alpha was ignored.

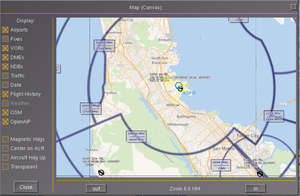

With the new system, the alpha is actually being used, but this is not really desired - it shows up as a semi-transparent map background in the ‘Select airport’ airfield chart, and the Map-Canvas window at least. James thought he had a work-around for this, but the one he came up with is awkward to support on Windows, he still needs to think on a better solution, so in the meantime he pushed a hack so at least Windows still builds and runs [36]. (If your canvas content inside PUI has an opaque background, you’ll be fine, so one fix is just to adjust the Canvas code for those dialogs, but James would like to find a backwards compatible fix)[37]

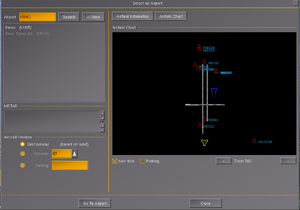

MapStructure used by airports.xml

Stuart has committed some changes [38] to update the Select Airport dialog to use Canvas MapStructure Layers to display airport information, rather than the now deprecated map layers. The change should be largely transparent to end users - the only significant change is that you can now display navigation symbols. This is all part of a long-term effort to provide the building blocks for a Garmin G1000 - these layers could be used for the airport display on the MFD, and could easily be combined with the APS layer to show a moving aircraft.[39]

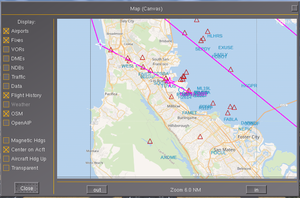

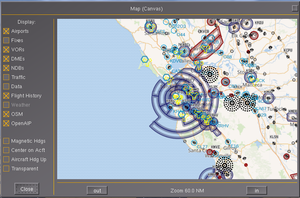

Canvas Slippy Maps

Stuart wants to build a set of Canvas MapStructure layers for a G1000 implementation - the canvas map UI is really just a way to display it.[43]

- SimGear: https://sourceforge.net/p/flightgear/simgear/ci/a800189c25a3fb4610c1e36655940a95694c4a84/

- FGData: https://sourceforge.net/p/flightgear/fgdata/ci/85b7665c19ebaae02d746fef53f25f8d8974eb13/[44]

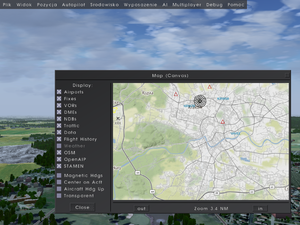

Stuart added some new Nasal Canvas MapLayers to support Slippy Maps, as used by most web-based mapping services such as openstreetmap. This allows us to display sectional charts (for the US - vfrmap.com) and airspace information (courtesy of openaip.org), as well as openstreetmap data. The canvas Map dialog has been updated to support these layers. Map data is retrieved over http and cached locally.[45]

fgdata now includes OpenStreetMap, OpenAIP and VFRMap layers for the Canvas Map Layer system, which can be seen on the canvas map dialog. They use a generic Slippy Map OverlayLayer Map Layer. Additional web-based mapping can be trivially added by creating a new lcontroller. See Nasal/Canvas/map/OpenAIP.lcontroller for an example. Stuart has also added a Web Mercator Projection to the Canvas Map support.[46]
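For readers unfamiliar with slippy maps, the following sketch shows the widely used Web Mercator tile-numbering scheme that such services rely on: a latitude/longitude pair and a zoom level map to integer tile indices, which are then substituted into a URL template. It is written in Python for illustration and is not the code in the lcontroller files; the function names and the `tile.example.org` template are hypothetical.

```python
import math


def latlon_to_tile(lat_deg, lon_deg, zoom):
    """Return the (x, y) slippy-map tile indices containing a position."""
    n = 2 ** zoom  # number of tiles along each axis at this zoom level
    lat_rad = math.radians(lat_deg)
    x = math.floor((lon_deg + 180.0) / 360.0 * n)
    y = math.floor((1.0 - math.asinh(math.tan(lat_rad)) / math.pi) / 2.0 * n)
    # Clamp so that edge cases (lon = 180, extreme latitudes) stay in range.
    return min(max(x, 0), n - 1), min(max(y, 0), n - 1)


def tile_url(template, lat_deg, lon_deg, zoom):
    """Fill a '{z}/{x}/{y}' style URL template (hypothetical example)."""
    x, y = latlon_to_tile(lat_deg, lon_deg, zoom)
    return template.format(z=zoom, x=x, y=y)
```

For example, `tile_url("https://tile.example.org/{z}/{x}/{y}.png", 0.0, 0.0, 1)` yields the tile at indices 1/1 on zoom level 1. A local cache, as mentioned above, would typically be keyed on the same z/x/y triple.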

Canvas VSD / Vertical MapStructure Layers

A few years ago, Omega95 created a Canvas VSD.

Recently, clm76 adapted Omega95's original Canvas VSD to the CitationX[48]

Canvas + Effects goes Deferred Rendering

Icecode GL has been experimenting with effects/shaders support for Canvas and got something kind of dirty and primitive but it works:

It works similarly to how Rembrandt does its buffers. There is a new texture type called "canvas" that allows shaders to access any canvas texture via a texture unit, just like you'd access a normal texture from the hard drive. This removes (most) limitations of addPlacement, which can only substitute the "base" texture (unit 0). The possibilities are endless, it just needs some care and work. At the moment we could have shadow mapping outside Rembrandt just by creating a new view placed at the Sun and making it render the depth buffer to a canvas. Then every ALS shader could access this canvas and do the shadow comparison thingy. Planar reflections in the water should be kind of trivial as well: it'd just require the model-view matrix to be multiplied by a reflection matrix, and the water shader could just paste the result over the water surface (with some more fancy calculations to make it pretty of course).[50]
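The reflection matrix mentioned for planar water reflections is the standard mirror transform about a plane. A minimal sketch in plain Python (not FlightGear or OSG code; all function names are illustrative), for a plane given by a unit normal n and offset d with n·x + d = 0:

```python
def reflection_matrix(normal, d=0.0):
    """4x4 matrix mirroring points across the plane n.x + d = 0.

    The normal must already be unit length.
    """
    nx, ny, nz = normal
    return [
        [1 - 2 * nx * nx, -2 * nx * ny, -2 * nx * nz, -2 * nx * d],
        [-2 * ny * nx, 1 - 2 * ny * ny, -2 * ny * nz, -2 * ny * d],
        [-2 * nz * nx, -2 * nz * ny, 1 - 2 * nz * nz, -2 * nz * d],
        [0.0, 0.0, 0.0, 1.0],
    ]


def mat_mul(a, b):
    """Multiply two 4x4 matrices stored as nested lists (row-major)."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def transform(m, point):
    """Apply a 4x4 matrix to a 3D point (w assumed to be 1)."""
    x, y, z = point
    v = (x, y, z, 1.0)
    return tuple(sum(m[i][k] * v[k] for k in range(4)) for i in range(3))
```

With a water surface at z = 0, `reflection_matrix((0, 0, 1))` flips the z coordinate, and `mat_mul(model_view, reflection_matrix((0, 0, 1)))` would give the mirrored model-view matrix for the reflection pass. A real implementation also has to deal with flipped triangle winding and clipping at the water plane.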

Canvas & Slave Cameras

Former FlightGear core developer F-JJTH shared a patch [53] implementing a proof-of-concept to render an arbitrary slave view to a FBO/RTT using a new dedicated "camera-display" instrument. This would be the kind of functionality required to implement many interesting features that cannot currently be added easily; Rear view mirrors in fighters could become a reality, as well as camera displays in SAR helicopters, outside views in airliners, backing view displays in cars etc.[54]

The patch seems not to break anything else, and does nothing unless the aircraft side code is added. It is therefore reasonably safe to add the patch to your working copy of FG. No git branch expertise is required.[55]

Once the patch is applied and the binary rebuilt, all that is needed is to add the new camera-display to an existing cockpit panel:

```xml
<camera-display>
  <name>cd</name>
  <texture></texture>
  <enabled type="bool">true</enabled>
  <view-number>0</view-number>
</camera-display>
```

For view configuration, it's using the standard view-manager interface, so you primarily need to set the view-number property to change the view (untested, feedback is just based on looking at the patch in question).

Specifically, when running the patched fgfs binary, look for: /instrumentation/camera-display and the view-number property underneath[56]

The current state is... well, it "works" ;-) However there are some bugs:

- The image jumps all over the place when the aircraft is moving.

- The colours are wrong.[57]

- The colours are wrong with Rembrandt. It looks as if the wrong buffer is being used.

- Jumping around is the same with and without Rembrandt.[58]

The jumping around effect seems to be in all views. The control tower view jumps back and forward, showing the whole tower at times.[59]

Before looking at some of our most advanced use-cases, it's best to tinker with a really simple scenario, to see how well this works, and what needs to be polished.[60]

If anybody is interested in working out what is missing or what can be improved, we should review working features doing similar things, e.g. the screenshot/streaming handlers or the camera setup routines used by CameraGroup.cxx itself.[61]

The patch itself seems to overlap with Zan's original "new-cameras" effort, as mentioned at: Canvas Development#Supporting Cameras. If this patch should work well enough, it would probably make sense to integrate it with the Canvas system to come up with a dedicated "camera" element for the Canvas system, that would be configurable using the format/properties used by the view manager: Howto:Configure views in FlightGear. At that point, it would actually be a terrific idea to review Zan's original patches and see if any of the features there could/should be integrated with the new Canvas::Camera element - like you say, once this is properly working, "the sky is the limit" (or not even that, think shuttle cams)[62]

Thus, the patch needs some TLC - however, it's definitely a very solid foundation, and very compelling as a prototype, especially given that the patch is pretty lightweight.

Much of the OSG machinery to set up the FBO/RTT (render to texture) is overlapping with Canvas functionality, so we could do away with that by using a dedicated Canvas element (which may fix some issues, e.g. colors being off).

Besides, if/when we actually use the approach used by Torsten's screenshot handler routines (mongoose/Phi), the "jumping" may also be gone (just a guess). At this point, we need to get more people involved who are able to patch/rebuild sg/fg from source and test these patches, so that we can come up with a team of folks to help with testing/developing. Hooray can certainly help with the Canvas side of this, which means that the FBO/RTT setup routines can be simplified, and he can help turn the whole thing into a dedicated Canvas "camera" element.

G1000 & MapStructure improvements


As of 09/2017, Stuart started taking some tentative steps towards a full Garmin G1000 simulation. He started from the excellent xkv1000, particularly for the PFD, which very closely mirrors the G1000.

For the MFD he's planning to use the Canvas MapStructure layers (obviously). One area he is going to have to develop is tiled mapping, which the MapStructure in fgdata doesn't currently support as a layer.

Stuart's thoughts are:

- Create an OverlayLayer layer with a controller that is analogous to the SymbolLayer, but likely with a simple update method rather than SearchCmd, and no .symbol equivalent

- Convert the tiled map example from the wiki, but also other layers such as a compass rose, airport diagram, lat/lon grid.

Stuart is currently undecided on how to handle the projection - whether to have the layer itself interpret the centerpoint of the map and scale, and then make a projection itself, or to provide it from the Map. The latter would undoubtedly be cleaner, but for something like a tiled map he suspects that the management will need to be quite low down the stack.[64]

Stuart is getting to grips with the Canvas MapLayer stuff, and in particular rendering layers from a map service. One layer he is wanting to add is airspace information, so it's very good news that this is available. From the looks of things Stuart may just be able to plug in the Slippy Tile URLs and render all the airspace in-sim in a Canvas map.[65]

Property I/O

As a first step towards developing a G1000, Stuart Buchanan has been looking at Nasal property read/write performance, as he understands that this is a bottleneck for developing complex Canvas applications.[66]

For well-organized code (aka writing only when needed, organizing the displays in suitable groups, staggered updates...), it's going to be fast enough. For a straightforward approach to complicated displays, probably not. A thousand properties sounds like a lot, but every update in translation is already 2 properties; combined with a visible flag and color information, you're already down to 166 elements you can update. If each of them is driven by reading a value from the tree, you're losing even more. Generally, if there is a way of making either method faster, it would affect performance in a positive way all over Nasal scripting, because currently property I/O is in my view the largest bottleneck (especially of canvas).[67]
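The figure of 166 elements can be reproduced with simple arithmetic. In the Python sketch below, the budget of 1000 property writes comes from the quote, while the split into 2 translation properties, 1 visible flag and 3 color components per element is an assumption chosen to match the quoted number.

```python
# Budget of property writes per update, taken from the quote above.
PROPERTY_BUDGET = 1000

# Assumed per-element cost; the 3 color components are a guess that
# reproduces the quoted figure, not a statement about Canvas internals.
writes_per_element = {"translation": 2, "visible": 1, "color": 3}

per_element = sum(writes_per_element.values())
max_elements = PROPERTY_BUDGET // per_element

print(per_element, max_elements)
```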

Low-hanging fruit in the canvas API:

- add more calls like updateText (updateTranslation, updateRotation,...) which check whether the property to be written has changed at all against a Nasal record before actually doing property I/O

- make the updateXXX methods store the node reference and use that rather than construct it afresh

If the test suite numbers hold up in reality, this ought to give a factor of 2 to a few hundred (depending on how often the argument actually has to be written; at least Thorsten was able to write 50 million numbers into a Nasal array much faster than 50,000 numbers into properties).[68]

It’s easy to add native helpers to Canvas to set some properties from code directly, rather than using the canvas.nas wrappers which then use props.nas internally. This could bypass a whole ton of Nasal if that’s the issue. Of course this code will still have to update properties from C++, so we should rather do the analysis first to see where the slowness occurs, since this could either be a very big win, or completely pointless based on that measurement.[69]
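The two suggestions (skip writes whose value has not changed, and keep the node reference instead of resolving it on every update) can be sketched in a language-neutral way. The Python model below is not the canvas.nas API: `FakeTree`, `FakeNode` and `CachedElement` are hypothetical stand-ins that only count how many lookups and writes would hit the property tree.

```python
class FakeNode:
    """Stand-in for a property node; counts how often it is written."""

    def __init__(self):
        self.value = None
        self.writes = 0

    def set_value(self, value):
        self.value = value
        self.writes += 1


class FakeTree:
    """Stand-in for the property tree; counts path lookups."""

    def __init__(self):
        self.nodes = {}
        self.lookups = 0

    def get_node(self, path):
        self.lookups += 1
        return self.nodes.setdefault(path, FakeNode())


class CachedElement:
    """Element wrapper that avoids redundant property I/O."""

    def __init__(self, tree, base_path):
        self._tree = tree
        self._base = base_path
        self._nodes = {}  # property name -> node reference, resolved once
        self._last = {}   # property name -> last value actually written

    def update(self, name, value):
        """Write value only if it differs from the last written one."""
        if name in self._last and self._last[name] == value:
            return False  # unchanged: no property I/O at all
        node = self._nodes.get(name)
        if node is None:
            node = self._tree.get_node(self._base + "/" + name)
            self._nodes[name] = node
        node.set_value(value)
        self._last[name] = value
        return True


tree = FakeTree()
label = CachedElement(tree, "/canvas/text[0]")
for frame in range(100):
    label.update("text", "ALT 5000")    # constant: written once
    label.update("rotation", frame % 2)  # changes every frame

print(tree.lookups,
      tree.nodes["/canvas/text[0]/text"].writes,
      tree.nodes["/canvas/text[0]/rotation"].writes)
```

In this toy run the constant text costs a single write over 100 frames, while the value that really changes is still written every frame, which is why the achievable speedup depends on how often the argument actually changes.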

Moving map/RNAV discussion

The airspace system is in the process of changing drastically.[70]

There is also an interesting discussion on the mailing list, started by David Megginson, about implementing G1000-type systems: https://sourceforge.net/p/flightgear/mailman/flightgear-devel/thread/CAKor_TGugT%2Be%3DQhdNBqWq3Pn%2BCEq6iLZsgaB7HmiU%2BBoDK0vxQ%40mail.gmail.com/#msg35924395[71]

When you're flying an IFR approach with a GPS navigator, you can use RNAV for the transitions, but need to switch to the ground ILS transmitter for the actual approach (they both use the same CDI on many planes, so it's easy to miss). The GTN 650 that I'm installing in my plane will switch CDI mode automatically under certain conditions, but it's not guaranteed. In the past, people could practice the hell out of these kinds of details in FlightGear (tuning, twisting, and identifying all the navaids, adding crosswind corrections, etc) so that they were habits when I flew them for real, but we can't practice RNAV procedures like this (or many others).[72]

This is something we need to address, as our GA aircraft will otherwise become more and more old-fashioned.[73]

FlightGear will still be great for people who want to practice the mechanical parts of flying (e.g. crosswind wheel landings in a Cub), but will slip further and further behind for people who want to use it for real IFR practice.[74]

zakh on the forum has been developing a Garmin G1000 clone called the zkv1000: https://forum.flightgear.org/viewtopic.php?f=14&t=32056 [75]

The G1000 has multiple pages of data with data entry for flightplans etc. Stuart is sure there is a lot that has been learned by the Shuttle team that can be passed on as "best practice". The Route Manager should be able to handle most of the "magenta line" tasks, but it may be that the more complicated routing such as the RF approach, fly-by vs fly-over requires some new autopilot coding as you describe.[76]

The MFD framework that is used on the Shuttle is more than capable of supporting most of the requirements; Canvas is capable of rendering the displays. So it's possible to do this.[77]

A number of contributors expressed concerns regarding the performance of the Canvas system when it comes to implementing sophisticated avionics like G1000-style systems. However, the fact that there are people who code canvas like there's no tomorrow and update 10 elements where an update of the group position would do the trick at a fraction of the cost doesn't mean that canvas or Nasal are slow - it means inefficient code is slow, and that's just as true for C++. Nasal as such is fast, and compared with the cost of rendering the terrain, rendering a canvas display is fast on the GPU (you can test by rendering but not updating a canvas display), and unless you're doing something very inefficient, calculating a display but not writing it to the property tree is fast (you can test this by disabling the write commands). It's the property I/O which you need to structure well, and then canvas will run fast. And of course the complexity of your underlying simulation costs - if you run a thermal model underneath to get real temperature readings, it costs more than inventing the numbers. But that has nothing to do with canvas.[78]

Structured in a reasonable way (i.e. minimizing property I/O in update cycles, avoiding canvas pitfalls which trigger high performance needs etc.) canvas is pretty fast (kind of reminds me of the old 'Nasal is slow' discussions... it's still the same things which are slow, except canvas hides it behind some abstraction - understand property I/O, and it'll run fast)[79]

The G1000 is a fairly significant piece of computer hardware that we're going to emulate. It's not going to be "free", particularly for those on older hardware that's already struggling. However, hopefully we can offload a chunk of the logic (route management, autopilot/flight director) to the core, and do things like offline generation of terrain maps to minimize the impact.[80]

For starters, Stuart is hoping to optimize the property access and become more familiar with the Canvas C++ code so that he can help bridge the gap between core and Canvas client developers. He thinks James has some thoughts on the Canvas C++, so he'll want to coordinate with him as well.[81]

One of the ideas raised by David is, should there maybe be a separate project to build an embeddable GPS navigator with different faces/UIs (and a default standalone front end)? As others have mentioned, a lot of the backend logic is the same, like doing a gradual transition from RNAV to Localiser on intercept to avoid a sudden jolt in the A/P, or calculating a turn past a fly-by waypoint. Again, that would be a way to join forces with other Sim dev communities. [82]

If we wanted to build a tablet-based emulator ourselves, we could at least join forces with the FSX and X-Plane communities to grow the pool of talent.[83]

Groups and Colors

Thorsten learned something rather important about canvas: after doing canvas for a while, most of us love the concept of suitably grouping things and then translating or hiding the whole group, which is a very performance-friendly way to get the job done compared with moving all elements.

Thorsten had the situation that the Shuttle HUD has several de-clutter levels which are progressively hidden as the de-clutter function is used. So he introduced dc0 to dc3 as the groups and operates with selective setVisible() on them according to the chosen de-clutter level.

He then tried to adjust the brightness of the HUD by setting the color on the group (specifically the alpha channel).

Unlike translations, rotations or visible statements, which act on the top level of the group, this one seems to be passed recursively down to the child elements, leading to a huge amount of property I/O.

Anyway, if you know what it does, it's a very useful function still - just it can't be used in a performance-critical place, and it should not be confused with performance-friendly group operations.[84]
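The difference in cost can be illustrated with a toy model that only counts property writes. The Python classes below are hypothetical and do not mirror the Canvas implementation; in particular, the assumption that a color costs 4 writes (r, g, b, a) per child is made purely for illustration.

```python
class Element:
    """Toy element that records how many property writes it received."""

    def __init__(self):
        self.props = {}
        self.writes = 0

    def set_prop(self, name, value):
        self.props[name] = value
        self.writes += 1


class Group(Element):
    """Toy group: transforms and visibility live on the group itself,
    while color is modelled as being pushed down to every child."""

    def __init__(self, children):
        super().__init__()
        self.children = list(children)

    def set_translation(self, x, y):
        self.set_prop("tx", x)
        self.set_prop("ty", y)

    def set_visible(self, flag):
        self.set_prop("visible", flag)

    def set_color(self, r, g, b, a):
        # Assumed behaviour: the color is propagated to all children.
        for child in self.children:
            for name, value in zip(("r", "g", "b", "a"), (r, g, b, a)):
                child.set_prop(name, value)

    def total_writes(self):
        return self.writes + sum(child.writes for child in self.children)


dc0 = Group(Element() for _ in range(50))

dc0.set_translation(10, 20)
dc0.set_visible(False)
cheap = dc0.total_writes()  # group-level operations only

dc0.set_color(0.0, 1.0, 0.0, 0.5)
expensive = dc0.total_writes() - cheap  # cost of the recursive color change

print(cheap, expensive)
```

With 50 children, moving and hiding the group costs 3 writes in this model, whereas one color change costs 200, which matches the observation that group-level color changes do not belong in a per-frame update.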

Canvas GUI

James is planning a bit of work in the Canvas GUI/widgets area but in the medium term (mid 2017), he wouldn't recommend making lots of extensions to the current Canvas GUI toolkit because he wants to give the whole Canvas-GUI architecture a review and sanity-check first. For the time being, he has no idea if this will be compatible or incompatible with the existing GUI controls, or even be successful. [85]

His intuition is that the current style system is overkill, but that the layout code will need some enhancements. So he would avoid both those areas for now. Adding missing widget types is probably safe, but if you propose them here we can discuss their API to hopefully keep it stable even if the implementation changes.[86]

Skia talks

James announced he wants to replace the vector drawing routines with another package which was designed with SIMD in mind.[87]

Blend2D is quite amazing from a technical point of view: it compiles native CPU code (including SIMD) for every draw operation using a JIT compiler.[88]

The idea is to use Skia (from Chrome), actually, since it has software and hardware backends. This would allow switching based on which gives better performance on a given machine, and easy porting to other devices, such as Android. The remote-canvas code is a proof-of-concept for this, the aim would be to use the same protocol to render the canvases in different threads.[89]

After reviewing Skia (including reading the sources), it seems like a very promising fit, albeit with the caveat that it is kind of huge. Blend2D looks pretty good but less mature than Skia, and most importantly, lacks a hardware renderer, which James thinks is critical to support devices such as the Raspberry Pi and cheap Android tablets. James expects these to be a very good target for the remote canvas (an RPi driving a wide-screen panel via HDMI could do both the PFD and ND of a Boeing/Airbus setup, but that’s 1920 x 1080 pixels, which is too much to fill in software at 60Hz). Anyway, at this point the code is small enough that experimenting with one renderer or another (Cairo also) is probably a weekend’s hacking, if anyone cared to try. James is currently working on hardware acceleration using QtQuick, since we already have Qt as a dependency.[90]

2016

SIMD

Erik is experimenting with streamlined Canvas rendering using Single Instruction Multiple Data (SIMD) instructions, which can operate on 4 floating point variables simultaneously (e.g. 3 or 4 value vectors). For the F-16 map this reduces the rendering delay at the first select from 12+ seconds down to 6 seconds.[91] [92]

A few users have reported a noticeable increase in framerate on their computers, e.g. on some desktops the framerate has doubled[93].

SSE and NEON work best when considered in the design stage. Adding it afterwards is always suboptimal. Thus, Erik might still update it in a place or two but this work isn't intended to detract from adding Skia.[94]

Porting the legacy HUD

James is planning to port the C++ HUD to use Canvas for its rendering soon (next few months), but he plans to keep XML compatibility (so aircraft don't see any change) and will keep the placement logic relative to the view, for the same reason. [95]

2D Panels

James is working towards migrating 2D panels to use the Canvas[96].

This step was inspired by a discussion that originally took place on the devel list, i.e. we should move the 2D panel and HUD rendering over to this approach, since that would get rid of all the legacy OpenGL code besides the GUI. [97]

Moving the HUD to use the Canvas would be a great step from his point of view, since it and 2D panels (which he is happy to write the converter for!) are the last places besides the UI which make raw OpenGL calls, and hence would benefit from moving to the Canvas (and thus, to use OSG internally) [98]

The original goal was to identify common requirements to see how we manage XML / property-list file processing in a nice way, to support the various formats we want to create canvas elements from - GUI dialogs, 2D panels and HUDs.[99]

James is planning in the next couple of weeks to port the panel code to generate Canvas elements, and then submit his fgcanvas utility to render any Canvas remotely. That code currently uses software rendering (too slow for the Raspberry Pi, he expects), but upgrading the code to use OpenGL ES2 rendering is next on his todo-list so he can run on Android / iOS devices as well as the Raspberry Pi. If you’re interested in working on that please let him know, he would be glad of the help.[100]

James has done most of the work to port the 2D panels to use Canvas in an experimental branch[101], and will do the C++ HUD next, and hence is creating Canvas elements bypassing the Nasal API.[102]

Skia backend

James is also currently looking at making a Skia (https://skia.org) backend for the Canvas to replace Shiva, because this would give good performance via its OpenGL backend, make people who don’t want to use Qt happier, and could also replace the OSG rendering of the Canvas within FlightGear itself, allowing us to ditch ShivaVG (which is unmaintained) and probably improve performance substantially. Skia can do SSE3/NEON optimised software rendering in a background thread, so Canvas wouldn’t eat into our OSG / triangle budget.[103] Skia already has quite intensive SSE/NEON optimisations, in addition to an optimised OpenGL renderer which he expects to be substantially more performant than the current OSG Node/Drawable hierarchy.[104]