Canvas Threading

| in a typical airliner, you have several EFIS screens, each with its own, largely independent Nasal code to drive a canvas surface for it; and then you have various subsystems, many of which are also mostly independent. They could all run in parallel, coordinating their activities via a thread-safe property tree.[1] — tdammers

|

| we should implement multi-processing or multi-threading using message passing, and mirrors of the property tree. (And hence no concerns about locking at all!)

Rough design is:

(so on a non-main thread, when you try and do ‘setValue’ on a property, we queue a ‘property-assign’ command and send it to the main thread) [2]— James Turner

|
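The message-passing idea quoted above can be illustrated with a minimal sketch. Everything here is an assumption made for illustration (the `MirrorTree` class, the `drain_commands` helper and the tuple layout of a command are hypothetical and not part of SimGear): a worker thread writes to its own mirror and queues a "property-assign" command, which the main thread applies later.

```python
import queue


class MirrorTree:
    """Thread-local mirror of a property tree (hypothetical helper)."""

    def __init__(self, outbox):
        self._values = {}
        self._outbox = outbox  # queue that the main thread drains

    def set_value(self, path, value):
        # The local mirror is updated immediately, no lock required.
        self._values[path] = value
        # The main tree is only touched later, by the main thread.
        self._outbox.put(("property-assign", path, value))

    def get_value(self, path, default=None):
        return self._values.get(path, default)


def drain_commands(outbox, main_tree):
    """Run on the main thread, e.g. once per frame; returns commands applied."""
    applied = 0
    while True:
        try:
            command, path, value = outbox.get_nowait()
        except queue.Empty:
            return applied
        if command == "property-assign":
            main_tree[path] = value
            applied += 1
```

Because only the main thread ever writes to `main_tree`, the tree itself needs no locking; the queue is the only shared object.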

| it would be nice if the state of the canvas can be serialized easily and with only little data into an other application. That is to be able to set up multiple viewer applications all displaying the same content. Think of an mfd that is shown in a bigger multi viewer environment. This should be efficient. How to achieve this efficiently requires a lot of thought.[3] — Mathias Fröhlich

|

Objective

Experiment with moving Canvas rendering out of the main loop by using aggressive OSG threading in conjunction with a separate GL context for Canvas cameras. Subsequently, also move Nasal (and property tree I/O) out of the main loop by using a dedicated thread per Canvas texture, probably with its own FGNasalSys instance and related subsystem machinery (mainly events/timers).

Status


RFC (03/2020)

Multi-threading in Nasal is supported and possible. In practice, however, people can only use it properly if they have a strong background in programming and/or in FlightGear internals, because the rest of FlightGear is basically single-threaded, and that also applies to all extension functions and FlightGear-specific Nasal APIs.

Basically, it's not feasible to retrofit multi-threading into existing code at a later time, unless you have a strong background in software engineering. However, designing your systems with threading in mind upfront can work out reasonably well - but obviously you need to work out the data flow and dependencies between different code routines.

The only person to really optimize all of the aircraft successfully, including Nasal code, is Richard, who happens to be a FlightGear core developer, also has a background in computer science, and certainly must have been using FlightGear for 10+ years.

However, Richard has written a number of helpers which he is in the process of sharing, i.e. adding to fgdata - so that others, less familiar with fg internals, can also use these helpers.

Speaking in general, you almost certainly don't want to use multi-threaded Nasal code - unless you have a corresponding background, or at least know Nasal/the property tree and the SGSubsystem architecture inside out.

Sooner or later, it seems rather likely that Canvas based avionics may optionally get their own/private Nasal instance (and property tree) per Canvas - so that the corresponding scripts can run outside the FlightGear main loop. Under the hood, a Canvas is already primarily a property tree - one that watches certain properties/locations, and that maps reads/writes to the corresponding OSG APIs.

Proceeding "as is" simply isn't viable for FlightGear as a whole, because the way Nasal and the Canvas system work, there is more and more rendering code tied to non-deterministic code (among others due to Nasal's garbage collector). However, Canvas based avionics have a well-defined set of inputs (think properties, and calling certain FG extension functions, e.g. to query the navdb) and well-defined outputs (usually just a single FBO/RTT texture).

Thus, conceptually the code used to update such a texture does not need to run inside the FlightGear main loop. Especially when keeping in mind that a typical cockpit may have 6+ of these FBO textures, all of which are currently updated by Nasal script running at frame rate inside the main loop.

The shared requirement of these update routines is that they need a state vector of properties and API calls - some are fixed (i.e. always the same properties), whereas others are dynamic and may change depending on the mode/context in question (imagine showing different modes/elements of a PFD/ND).

However, our experience when designing the MapStructure/ND frameworks has been that regardless of the number of instruments, it isn't feasible to always poll/getprop properties during each update cycle - instead, it makes sense to use memoization (caching) - Richard came up with a dedicated framework for that, and also for splitting work across multiple frames.

This is an approach that the MapStructure framework also used (instead of threading).
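To make the two ideas concrete, here is a minimal sketch of change-based caching and of splitting work across frames. It is not Richard's framework; `ChangeGate` and `run_slice` are hypothetical names chosen for this illustration.

```python
class ChangeGate:
    """Calls `on_change` only when the value moved by at least `delta`."""

    def __init__(self, delta, on_change):
        self.delta = delta
        self.on_change = on_change
        self.last = None  # last value that triggered a redraw

    def update(self, value):
        if self.last is None or abs(value - self.last) >= self.delta:
            self.last = value
            self.on_change(value)
            return True
        return False  # change too small, skip the expensive redraw


def run_slice(tasks, frame, per_frame):
    """Round-robin: run only `per_frame` of the tasks on this frame."""
    per_frame = min(per_frame, len(tasks))
    start = (frame * per_frame) % len(tasks)
    for offset in range(per_frame):
        tasks[(start + offset) % len(tasks)]()
```

With four update tasks and `per_frame=2`, each task runs every second frame, so the per-frame cost is roughly halved at the price of a one-frame delay.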

Thus, if you were to render 10+ instances of an ND, it would be rather pointless to do so at frame rate while always polling /position/*, /orientation, and /fdm/* - likewise, using listeners would not be a good idea for state that changes per frame.

In other words, what's needed is a partitioning mechanism to subscribe to relevant state, and then only do the fetching once (per update cycle), where all subscribing avionics would merely get a copy of the state, rather than each doing their Nasal/C++/Nasal context switches for each extension function call.

Richard has worked out a generic scheme to accomplish exactly that (see his fgdata commits to /Nasal).

In the mid-term, it will make sense to have the discussion if, and how, to specify lists of relevant properties for each canvas - which would include output and input properties. At that point, the Canvas system itself could traverse that list at the CanvasMgr level, and provide each canvas texture with a copy of fresh state, without unnecessarily causing property tree "traffic".
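A minimal sketch of such a subscription scheme follows. The `StateBroker` class and its methods are hypothetical; `read_property` stands in for whatever property tree access the real system would use. Each distinct property is read once per cycle, and every canvas receives its own copy.

```python
class StateBroker:
    """Fetches every subscribed property once per cycle (hypothetical helper)."""

    def __init__(self, read_property):
        self._read = read_property  # callable(path) -> value
        self._subscriptions = {}    # canvas name -> list of property paths

    def subscribe(self, canvas, paths):
        self._subscriptions[canvas] = list(paths)

    def snapshot(self):
        # Collect the union of all paths so shared inputs are read only once.
        wanted = set()
        for paths in self._subscriptions.values():
            wanted.update(paths)
        fresh = {path: self._read(path) for path in wanted}
        # Hand every canvas a private copy of just the state it asked for.
        return {
            canvas: {path: fresh[path] for path in paths}
            for canvas, paths in self._subscriptions.items()
        }
```

In this sketch a PFD and an ND that both subscribe to the heading cause a single read of that property per cycle, regardless of how many displays exist.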

At that point, the setup would resemble the "instant replay/flight recorder" subsystem - because that, too, is using a configuration scheme to encode relevant I/O properties (per aircraft).

The thing is, once you have this sort of info PER INSTRUMENT (per canvas), you can trivially use a dedicated SGPropertyNode per Canvas texture, and then also hook up a dedicated FGNasalSys instance to the Canvas texture in question.

With this sort of setup, you'll then end up with an off-screen RTT/FBO context that can be asynchronously updated in the background, without having to run inside the main loop - you would even have to tell OSG to only run it at ~30 Hz, because it could easily run at 100+ Hz otherwise, i.e. updating a texture unnecessarily.
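The throttling part can be sketched independently of OSG. The `RateLimiter` class below is a hypothetical helper; how the limit would actually be communicated to OSG is not covered here. Time is passed in explicitly so the behaviour is easy to test.

```python
class RateLimiter:
    """Decides whether a redraw is due, given the current time in seconds."""

    def __init__(self, hz):
        self.interval = 1.0 / hz
        self.next_due = None  # no redraw has happened yet

    def due(self, now):
        if self.next_due is None or now >= self.next_due:
            self.next_due = now + self.interval
            return True
        return False  # too early, keep the previous texture
```

A worker that ticks at 100 Hz would call `due()` on every tick and redraw only when it returns `True`, which with a 30 Hz limit happens on every fourth tick.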

The kind of coding to populate/update and render such a canvas texture would be a little different compared to what people are currently doing, but it would be well worth it - because you could literally have 10+ canvas textures computed/updated and generated outside the main loop, with the only required synchronization point being the list of subscriptions (properties, and FlightGear APIs) - and obviously the final stage where some locking will be required to fetch the generated Canvas FBO from the OSG worker thread.

And this, too, could be facilitated by some work that Richard has shared with the community, namely "Emesary" - which he is already using to hook up a threaded garbage collector to Nasal/FlightGear.

Admittedly, all of this may seem a little roundabout and maybe even complex - but short of using a simple "worker thread" setup, threading Nasal code is unlikely to work due to the sheer number of architectural restrictions in FlightGear itself.

Fixing up the Canvas system to support an optional mode where the property tree is a private one, not shared with the main fgfs tree, is comparatively straightforward however - right now, Nasal itself is integrated in the form of a singleton, so that would need to change - but that's under way already, due to unit testing work that bugman has been working on recently.

More recently, Jules has been working on CompositeViewer support, which is another promising option - because CompositeViewer means that a completely independent scene graph can be easily processed and shown, without cluttering up the main loop.

Motivation

| once it [Canvas] is in simgear It should be really multi-viewer/threading capable. Everything that is not, might be changed at some time to match this criterion.

Such a change often comes with changes in the behavior that are not strictly needed but where people started relying on at some time. So better think about that at the first time. — Mathias Fröhlich (2012-10-22). Re: [Flightgear-devel] Canvas reuse/restructuring.

|

Background

This is a collection of ideas, discussions and patches with the goal of moving certain types of Canvas code out of the main loop into a dedicated background thread/process.

FlightGear does use multiple threads, but Nasal scripting is not run in one of those, for the reasons that Thorsten outlined. It is trivial to run Nasal in another thread, and even to thread out algorithms using Nasal. Nasal itself was designed with thread-safety in mind, by an enormously talented software engineer with a massive track record doing this kind of thing (background in embedded engineering at the time). FlightGear however was never "designed" like Thorsten alluded to, rather its architecture "happened" through the work of dozens of people over the course of almost two decades.

The bottleneck when it comes to threading in Nasal is indeed FlightGear: the very instant you access any non-native Nasal APIs, i.e. anything that is FlightGear specific (property tree, extension functions, fgcommands, canvas), the whole thing is no longer easy to make work correctly without re-architecting the corresponding component (think Canvas).

In the case of Canvas, it would be relatively straightforward to do just that, by introducing a new canvas mode where each canvas (texture) gets its own private property tree node (SGPropertyNode) that is part of simgear::canvas. At that point, you can also add a dedicated FGNasalSys instance (Nasal interpreter) to each canvas texture, and that could be threaded out using either Nasal's threading support, simgear's SGThread API, or OpenSceneGraph's helpers.

Obviously, there would remain synchronization points, where this "canvas process" (thread) would fetch data from FlightGear (properties) and also send back its output to FlightGear (aka the final texture).
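The two synchronization points can be sketched as follows. This is a simplified model under stated assumptions: `CanvasWorker` is a hypothetical class, `render` stands in for the per-canvas update logic, and the "texture" is any Python object rather than a real FBO.

```python
import threading


class CanvasWorker:
    """One canvas, updated off the main loop, with two sync points."""

    def __init__(self, render):
        self._render = render  # callable(inputs dict) -> texture object
        self._lock = threading.Lock()
        self._inputs = {}
        self._texture = None

    def push_inputs(self, inputs):
        """Sync point 1: the main loop hands over a copy of fresh state."""
        with self._lock:
            self._inputs = dict(inputs)

    def step(self):
        """Runs on the worker thread; the lock is not held while rendering."""
        with self._lock:
            inputs = dict(self._inputs)
        texture = self._render(inputs)
        with self._lock:
            self._texture = texture

    def latest_texture(self):
        """Sync point 2: the main loop fetches the last finished texture."""
        with self._lock:
            return self._texture
```

The lock only guards the hand-over of inputs and output, so a slow `render` never blocks the main loop for longer than a dictionary copy.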

Other than that, it really is surprisingly straightforward to come up with a thread-safe version of the Canvas system by making these two major changes. The FGNasalSys interpreter would then no longer have access to the global namespace or any of the standard extension functions; it could only manipulate its own canvas property tree. All I/O between the canvas texture thread (Nasal) and the main loop (thread) would have to take place using a well-defined I/O mechanism: in its simplest form a simple network protocol (even telnet/props or Torsten's AJAX/mongoose layer would work "as is"), but more likely this would evolve into something like Richard's Emesary system.

Like Thorsten said already, you cannot "simply" thread out "all nasal" without either changing all existing Nasal code or without re-architecting FlightGear along the way.

Based on my own understanding of FlightGear, its main loop and the scripting layer, the most promising way forward would indeed be to tinker with a new addon mode where scripts could be run inside such a sandboxed environment, using a background thread. This would be akin to Firefox web extensions, which basically hit the same restrictions because of the proliferation of JavaScript in browsers - so this kind of model has been demonstrated to work: one background thread for the work, and main loop scripts for the interaction with the rest of the environment.

This kind of thing can be worked on without breaking things, and it is largely facilitated by bugman's unit testing work, i.e. being able to start independent instances of the Nasal interpreter and test these outside the sim.

Once you are able to do just that, you can also easily take FGNasalSys and come up with a stripped-down version to remove all the stuff that makes such an instance thread-unsafe, and re-add what's useful later on. Probably, using some kind of RPC/IPC mechanism - socket I/O for starters should do.

The very moment you see bugman making reports about testing Nasal standalone in conjunction with certain FG APIs, all the building blocks will be in place. A new addon mode/version could be added to support threaded addons, which is a no-brainer to do, because it cannot break anything, since we don't have any threaded addons yet. And at that point, it would also be trivial to tinker with a new canvas mode, that has its own private property tree and its own private Nasal instance.

This is really low-hanging fruit to be honest, and it's a straightforward path to provide FlightGear with better threading support, so that anything involving new Nasal work can be made to live inside separate threads, i.e. using such addons or canvas textures that are updated asynchronously, and which are only synchronized at certain time steps.

In addition, from a canvas standpoint this would provide for an excellent mechanism to bring unit testing to canvas-based avionics, because those can then trivially be executed outside the main loop, so that we could even run a batch job on the build server to create screen shots of avionics (say a PFD or ND) purely based on hooking them up to a pre-recorded flight or some other stored state vector containing all the properties/data.
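A minimal sketch of such a replay test is shown below. `render_pfd` is a hypothetical stand-in for an avionics update routine that maps a state vector to a list of draw commands, and the property paths are only examples; a real test would compare rendered screenshots instead of tuples.

```python
def render_pfd(state):
    """Hypothetical update routine: state vector in, draw list out."""
    altitude = round(state["/position/altitude-ft"])
    heading = round(state["/orientation/heading-deg"]) % 360
    return [
        ("text", "ALT %05d" % altitude),
        ("text", "HDG %03d" % heading),
    ]


def replay(recording, render):
    """Feed every recorded frame to the renderer, outside any main loop."""
    return [render(frame) for frame in recording]


# Two frames of a pre-recorded flight (values made up for illustration).
RECORDING = [
    {"/position/altitude-ft": 3499.6, "/orientation/heading-deg": 359.7},
    {"/position/altitude-ft": 3510.2, "/orientation/heading-deg": 1.2},
]
```

Because the renderer depends only on the state it is handed, the same recording always produces the same output, which is what makes batch testing on a build server possible.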

Just running "all of Nasal" outside the main loop is going to be much more work than being smart about it: preparing the hooks to thread out the interesting stuff, and providing an infrastructure to port/implement new features in the future.

Such a modified/modernized Canvas system would then contain its own private property tree for each instance and its own scripting interpreter (context), which would mean that it could even be compiled into a standalone executable, and even be executed in a headless fashion.

Problem

Originally, the whole Canvas idea started out as a property-driven 2D drawing system, but admittedly, what we ended up with is a system that has unfortunately become tightly coupled to Nasal. Indeed, there are some things where you definitely need to use Nasal to set up/initialize things. But under the hood, 99% still is pure property I/O, which is also why the property tree is becoming a bottleneck.

In general, Nasal is not the problem here - the problem is the way the Canvas system is designed, and the way both Nasal and Canvas are integrated. It's a single-threaded setup, i.e. we are inevitably adding framerate-limited scripted code that runs at <= 60 Hz to the main loop, to update rendering-related state. This is a bit problematic, but it is not hard to fix.

It would be a problem to fix up existing Canvas code (think NavDisplay, PFDs, EICAS etc), but with a few minor tweaks, we could come up with a dedicated Canvas mode where scripts updating a canvas property tree are running outside the main loop. This would mean that they could not access any of the main loop APIs, but apart from that, it's actually a no-brainer, i.e. a straightforward thing to do.

Out of the box, OSG comes with support for creating and updating textures asynchronously; we just aren't using this currently. For obvious reasons, coding such a Canvas texture would be a different thing. But the hooks required to make this happen are fairly straightforward.

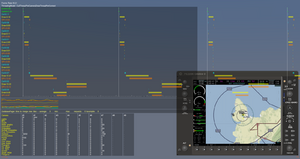

We have more and more aircraft that feature comparatively complex avionics, implemented on top of the Canvas stack via Nasal.

Depending on the number of simulated displays/avionics, there is a fair share of property I/O going on, including a fair amount of redundant I/O, because many avionics/display instances share certain I/O requirements (think access to /position, /orientation etc.)

Many modern aircraft will feature between 6 and 8 Canvas-based MFDs that may be shown/updated at the same time.

For the time being, the free-form nature of Canvas/Nasal based avionics means that most avionics don't use any dedicated frameworks or standard patterns to formalize if/how and when crucial state is updated.

This includes property tree state, as well as other state retrieved via FlightGear extension functions (think Nasal/cppbind).

Thus, a number of complex cockpits have been demonstrated to be affected by the number of Nasal/Canvas based displays. Often, this is due to the structure of existing legacy code.

Goal

This article is intended to provide a comprehensive summary of the various discussions and proposals we have seen in the context of adapting the Canvas system to come up with a new execution mode/model, with the ultimate goal of improving run-time performance - which may include, but isn't restricted to, optionally moving certain aspects of a Canvas-based display out of the main loop into dedicated background threads.

Furthermore, the goal is to come up with an execution model that is backwards compatible, and strictly "opt-in" for any functionality that cannot be provided in a safe fashion.

Canvas Architecture

The Canvas system is primarily implemented in C++. It is a listener-based subsystem that watches the global property tree for relevant updates/changes; specifically, accesses to /canvas are monitored.

Under the hood, each Canvas is implemented as an owner-drawn gauge (OD_Gauge). Canvas textures are positioned in the scene using a texture visitor (OSG), replacing static textures as needed.

Each Canvas texture is then composed of so called "elements", the lowest-level element being the "group" which is primarily used to logically structure/organize a texture into a hierarchy of lower-level building blocks. Therefore, each Canvas texture always has a "root" node, which is a group.

In turn, each group may consist of specific "element" implementations, i.e. to render certain types of content, such as:

- text

- paths

- raster images

(and any combination of these)
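The hierarchy described above can be modelled with a small sketch. This is not the Canvas API: the `Element` class, its `create_child` method and the property names are hypothetical and only mirror the structure (a root group containing text, path, image or further group elements).

```python
from dataclasses import dataclass, field


@dataclass
class Element:
    kind: str  # "group", "text", "path" or "image"
    props: dict = field(default_factory=dict)
    children: list = field(default_factory=list)

    def create_child(self, kind, **props):
        # Only groups structure the texture; leaf elements hold no children.
        if self.kind != "group":
            raise ValueError("only groups can hold children")
        child = Element(kind, props)
        self.children.append(child)
        return child

    def walk(self, depth=0):
        """Yield (depth, element) pairs in drawing order."""
        yield depth, self
        for child in self.children:
            yield from child.walk(depth + 1)


# Every texture starts with a root group.
root = Element("group")
root.create_child("text", text="HDG")
layer = root.create_child("group")
layer.create_child("path", d="M0,0 L10,10")
```

Walking `root` visits the root group, the text element, the nested group and finally the path inside it.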

In addition, there are higher level helpers implemented in scripting space, e.g. a "window" class implemented on top of the image element. Or support for SVG graphics, implemented on top of the OpenVG based path handling support. Also, there is a special group type to handle specifically geographic projections, for mapping/charting purposes.

Each Canvas has a handful of well-defined property names (and types) that it is watching to handle "events" - think stuff like changing the size/view port etc. And then there is a single top-level root group, which serves as the top-level element to keep other Canvas elements.

A Canvas element is nothing more than a rendering primitive that the Canvas system can handle - e.g. stuff like a raster image can be added to a Canvas group, a text string/font, and 2D drawing primitives in the form of OpenVG instructions mapped to ShivaVG. And that's basically about it (with a few exceptions that handle use-case specific stuff like 2D mapping/charts).

Apart from that, the main thing to keep in mind is that a Canvas is really just a FBO - i.e. an invisible RTT context - to become actually visible, you need to add a so called "placement" - this tells the rendering engine to look up a certain canvas and add it to the scene/cockpit or the GUI (dialogs/windows).

So far, all of this is handled using native code that watches the global /canvas tree in the property tree - there is a canvas manager that handles events and passes them onto the corresponding canvas instance and its child elements.

Realistically, however, all Canvas textures are instantiated/updated using scripting space hooks that end up writing to the corresponding properties in the global property tree. This makes it much easier to manipulate a canvas/element, because you don't need to do any low-level getprop/setprop stuff, but can directly use an element-specific API.

A FlightGear Canvas is primarily a property tree in the main property tree, where attributes of the texture, and each element, are mapped to "listeners" (or updated via polling).

Internally, this will dispatch events/notifications to the current texture/event.
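The listener mechanism can be illustrated with a short sketch. The `PropertyNode` class here is a hypothetical, flattened model and not SimGear's SGPropertyNode; in particular, it is an assumption of this sketch that listeners fire only when a value actually changes.

```python
_MISSING = object()  # marks "property has never been written"


class PropertyNode:
    """Flat property store that notifies listeners on change."""

    def __init__(self):
        self._values = {}
        self._listeners = {}

    def add_listener(self, path, callback):
        self._listeners.setdefault(path, []).append(callback)

    def set_value(self, path, value):
        previous = self._values.get(path, _MISSING)
        self._values[path] = value
        # Writing the same value again causes no event and no redraw.
        if previous is _MISSING or previous != value:
            for callback in self._listeners.get(path, []):
                callback(path, value)
```

A text element would register a callback on its own "text" property and mark itself dirty when the callback fires, which is the step that eventually reaches the rendering back-end.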

A Canvas texture is an invisible offscreen rendering context (RTT/FBO) - it is made visible by adding a so called "placement" to the main FlightGear scenegraph, where the static texture will be replaced with one of the dynamic Canvas textures.

A FlightGear Canvas supports events for UI purposes, so that listeners can be registered for events like "mouseover" etc.

The Canvas scenegraph is a special thing: its root is always a Canvas group, and each group can have an arbitrary number of children added, i.e. other elements (or other groups).

The primary Canvas elements are 1) raster images, 2) osgText nodes, 3) map, 4) groups and 5) OpenVG paths.

The FlightGear Canvas system does not understand SVG images - instead, it is using the OpenVG back-end to translate a subset of SVG/XML to Canvas properties by mapping those to OpenVG primitives.

There are many features that are not supported by this SVG parser (svg.nas), but it is written in Nasal and can be easily extended to also support other features, e.g. support for raster images and/or nested SVG images.

Approach

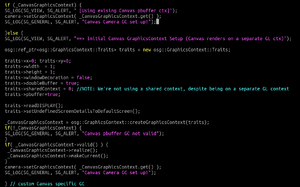

One starting point would be changing the assumption that all canvas texture PROPERTIES live in the global property tree. Instead, each Canvas texture would get its own SGPropertyNode root, which isn't accessible from anywhere else.

At that point, you have a Canvas/OD_Gauge context that can be updated by changing said PRIVATE property tree. As long as this property tree is only ever updated from a single place (thread), multi-threading things becomes possible, because you only need to serialize access whenever you want to fetch/display the updated texture. But apart from that, the update/redraw mechanism could be running in a background thread.

From a Canvas perspective, one obvious issue is dealing with Canvas textures that fetch data/imagery from other textures, because that, too, would require synchronization.

But other than that, you would end up with a Canvas system whose textures can be asynchronously updated by a background thread. Scripts doing so would look a bit different, because they would lack access to 90% of the common FG APIs (think geodinfo and friends), since those cannot be considered to be thread-safe.

As you can probably tell, this is something that we once discussed behind the scenes - and it would nicely align with the original idea of "remote properties", i.e. sync'ing and replicating properties between property trees from different threads/processes, the main thing needed to do this is a subscribe/publish mechanism that works over sockets (or some other IPC): http://wiki.flightgear.org/Remote_Properties
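A publish/subscribe link of this kind can be sketched with plain sockets. The wire format (one JSON object per line) and the names `Publisher` and `apply_updates` are assumptions made for this illustration; they are not the format of any existing FlightGear protocol.

```python
import json
import socket


class Publisher:
    """Main-loop side: forwards only the properties a canvas subscribed to."""

    def __init__(self, sock, subscribed):
        self._sock = sock
        self._subscribed = set(subscribed)

    def publish(self, path, value):
        if path not in self._subscribed:
            return False  # nobody asked for this property, send nothing
        line = json.dumps({"path": path, "value": value}) + "\n"
        self._sock.sendall(line.encode("utf-8"))
        return True


def apply_updates(sock, mirror, count):
    """Canvas side: read `count` updates and store them in the local mirror."""
    with sock.makefile("r", encoding="utf-8") as reader:
        for _ in range(count):
            message = json.loads(reader.readline())
            mirror[message["path"]] = message["value"]


# socketpair() gives two connected endpoints, handy for a local experiment.
main_side, canvas_side = socket.socketpair()
```

The same two functions would work unchanged across processes or machines, because nothing in them depends on shared memory.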

This is something where Richard's Emesary work could become highly useful, because the cost of adapting the Canvas system to optionally support an out-of-mainloop mode would be marginal - further, bugman's ongoing work on unit-testing and unit-testing Nasal in particular, should come in very handy, because it would become much easier to start up dedicated FGNasalSys instances (our in-sim Nasal interpreter) that may not run inside the main loop, i.e. lacking most standard FG APIs.

Now, when it comes to using Canvas without Nasal, that's actually a valid use-case, and I find it important to keep that use-case in mind, because over time, we've seen more and more attempts at coming up with frameworks, that basically shield back-end code from changes to front-end code (and vice versa), this is why it is important to primarily work through the property tree, and not rely on dedicated Nasal bindings (cppbind).

It would be a good thing to keep this in mind, because doing so means that multi-instance setups supporting Canvas would become much easier, i.e. there is no problem using Nasal at all, as long as it happens through well-defined interfaces that basically hide the scripting aspect.

Furthermore, a number of core devs have been thinking about using the Canvas system for scenery-related runtime drawing, which would also require Canvas to become thread-safe, i.e. using a dedicated/private property tree instance to isolate all access to the property tree that is used to update/redraw such textures. This would mean that anything involving OSM2City, photo-scenery, and even random buildings could be enormously boosted by making the Canvas system available accordingly.

Threading out all of Nasal is not trivial at all - however, modifying a handful of subsystems to allow future features to run outside the main loop would be a relatively self-contained task.

If you have ever done any C++ programming for FlightGear, you realize that there is a thing called the global property tree, and that there is a single global scripting interpreter. The bottleneck when it comes to Nasal and Canvas is unnecessary, because the property tree merely serves as an encapsulation mechanism, i.e. strictly speaking, we're abusing the FlightGear property tree to use listeners that are mapped to events, which in turn are mapped to lower-level OSG/OpenGL calls - which is to say, this bottleneck would not exist, if a different property tree instance were used.

This, in turn, is easy to change - because during the creation of each canvas, the global property tree _root is set, which could also be a private tree instead. Quite literally, this means changing 5 lines of C++ code to use an instance-specific SGPropertyNode_ptr instead of the global one.
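The shape of that change can be shown with a simplified model. This is not the actual C++ code: the `Canvas` class, `GLOBAL_TREE` and the nested dictionaries are hypothetical stand-ins for the canvas, the global property tree and SGPropertyNode. The only point is that the root becomes a constructor argument instead of a hard-coded global.

```python
GLOBAL_TREE = {}  # stands in for the global property tree


class Canvas:
    def __init__(self, name, root=None):
        # root=None keeps the existing behaviour: state lives in the shared tree.
        self.private = root is not None
        tree = root if self.private else GLOBAL_TREE
        self.node = tree.setdefault("canvas", {}).setdefault(name, {})

    def set(self, key, value):
        self.node[key] = value


shared = Canvas("pfd")
shared.set("width", 512)

private_root = {}  # owned by this canvas only
private = Canvas("nd", root=private_root)
private.set("width", 1024)
```

After running this, the "pfd" canvas is visible under `GLOBAL_TREE`, while the "nd" canvas exists only in `private_root`, which is why the opt-in mode cannot disturb existing code.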

At that point, you have a canvas that is inaccessible from the main thread (which sounds dumb, but once you think about it, that's exactly the point). So, the next step is to provide this canvas instance with a way to access its property tree, which boils down to adding a FGNasalSys instance to each Canvas - that way, each canvas texture would get its own instance of SGPropertyNode + FGNasalSys.

Anybody who's ever done any avionics coding will quickly realize that you still need a way to fetch properties from the main loop (think /fdm, /position, /orientation), but that's really easy to do using the existing infrastructure: you could use any of the existing I/O protocols (think Torsten's AJAX stuff), and you'd end up with Nasal/Canvas running outside the main loop.

The final step is obviously making the updated texture available to the main loop, but other than that, it's much easier to fix up the current infrastructure than fixing up all the legacy code.

Telling the canvas system to use another property tree (SGPropertyNode instance) is really straightforward - but at that point, it's no longer accessible to the rest of the sim. You can easily try it for yourself, and just add a "text" element to that private canvas. The interesting part is making that show up again (i.e. via placements). Once you are able to tell a placement to use such a private property tree, you can synchronize access by using a separate thread for each canvas texture (property tree). But again, it would be a static property tree until you provide /some/ access to it - so that it can be modified at runtime, and given what we have already, hooking up FGNasalSys is the most convenient method. But all of the canvas bindings/APIs we have already would need to be reviewed to get rid of the hard-coded assumption that there is only a single canvas tree in use.

Like you said, changing fgfs to operate on a hidden/private property tree is the easy part, interacting with that property tree is the interesting part.

Also, it would be a very different way of coding, we would need to use some kind of dedicated scheduling mechanism, or such background threads might "busy wait" unnecessarily.
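One way to avoid busy waiting is to let the background thread sleep on an event until an update is requested. The sketch below uses a hypothetical `UpdateScheduler` class; it illustrates the waiting pattern only and says nothing about how FlightGear would schedule such threads.

```python
import threading


class UpdateScheduler:
    """Background thread that sleeps until an update is requested."""

    def __init__(self, update):
        self._update = update
        self._wake = threading.Event()
        self._stop = False
        self.runs = 0
        self._thread = threading.Thread(target=self._loop, daemon=True)
        self._thread.start()

    def _loop(self):
        while True:
            self._wake.wait()   # blocks without consuming CPU
            self._wake.clear()  # requests made from now on trigger another run
            if self._stop:
                return
            self._update()
            self.runs += 1

    def request_update(self):
        # Several requests before the thread wakes up collapse into one run.
        self._wake.set()

    def shutdown(self):
        self._stop = True
        self._wake.set()
        self._thread.join()
```

The main loop would call `request_update()` whenever fresh state has been handed over; between requests the worker consumes no CPU time at all.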

If you know how to build sg/fg from source (git) and how to apply patches, I can provide the corresponding pointers to get you started experimenting with such an adapted Canvas system. We experimented with it a couple of years ago, and there should still be patches somewhere on the forum or the wiki.

Speaking in general, once each Canvas texture uses its own private property tree instance, it would be fairly straightforward to also add private instances of a Nasal interpreter and a "property rules" system to each canvas - these are existing features in simgear/flightgear. Once you add SGSocket-based connectivity and Richard's Emesary system, you have a 2D drawing system that can run asynchronously, out of the main loop, and you don't even have to use Nasal, but can use property rules (or JSBSim systems) to create/update OpenGL textures.

Again, like I said, it's not difficult to add a SGPropertyNode instance to each Canvas texture, but it paves the way for a future where a Canvas texture and the logic creating/updating it, can live (run) outside the main loop, with the main loop only fetching the final image, or possibly streaming it from another process (for which we already have working code, thanks to ThomasS).

Torsten's autopilot/property rules code is highly functional and it could be easily extended to support event handling constructs, so that we can do away with Nasal based timers and listeners to update a Canvas. Richard's Emesary system is a very neat mechanism to establish the coding protocols to do message-based programming, and once you have these systems hooked up to a single private property tree instance, you can literally have your cake and eat it. Most of this is really code we already have, it's just not yet integrated. But once that is tackled by someone, you also end up with a Canvas system that can boost development of anything involving autogen-scenery like osm2city, think random buildings and osm2city integrated via the Canvas system.
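To show what updating a canvas without a script could look like, here is a minimal sketch of declarative rules. The rule format (input, output, gain, offset, clamp) and the `apply_rules` function are hypothetical and much simpler than the real property rules configuration; the property names are examples only.

```python
def apply_rules(rules, tree):
    """Evaluate each rule once: output = clamp(input * gain + offset)."""
    for rule in rules:
        value = tree[rule["input"]] * rule.get("gain", 1.0) + rule.get("offset", 0.0)
        low, high = rule.get("clamp", (float("-inf"), float("inf")))
        tree[rule["output"]] = max(low, min(high, value))


# Move an artificial horizon by 4 pixels per degree of pitch,
# limited to +/- 100 pixels (numbers made up for illustration).
RULES = [
    {
        "input": "/orientation/pitch-deg",
        "output": "horizon/translate-y",
        "gain": -4.0,
        "clamp": (-100.0, 100.0),
    },
]
```

Since the rules are plain data, they can be evaluated on any thread that owns the private tree, with no interpreter or garbage collector involved.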

But as can be seen, many areas in FlightGear could enormously benefit by adapting the Canvas system to OPTIONALLY support per-texture property trees that are conceptually private, so each canvas texture can have its own scripting interpreter instance, property rules and timers/events (listeners).

The key efforts here are really bugman's work on unit testing and Richard's work on Emesary - using their groundwork, sooner or later, someone will be brave enough to come up with a new canvas mode that can run asynchronous Nasal scripts to create and update a texture. While those scripts will look very different, and will probably even intimidate people new to message-based coding and multi-threading, they will come with the benefit of not cluttering the main loop, i.e. providing stable frame rates and frame spacing.

References
