Shiva Alternatives

| This article is a stub. You can help the wiki by expanding it. |

| The FlightGear forum has a subforum related to: Canvas |

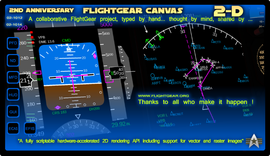

| Started in | 02/2022 (Available on next) |
|---|---|
| Description | Canvas.Path being ported for Core Profile support |
| Contributor(s) | Erik, Scott, Fahim, Stuart, James (see references below) |
| Status | Experimental (in the process of being integrated) |
| Changelog | See commit "Add ShaderVG, a shader based version of ShivaVG" |

To support Canvas on OpenGL Core profile, the plan is to migrate the Canvas Path backend from Shiva to ‘something else’ which implements the required drawing operations. Unfortunately two of the probable solutions (Skia from Chrome and Cairo from Gtk) are both enormous and a pain to deploy on macOS and Windows.

The issue is mostly how the different libraries handle clipping. For a non-rotated (or, strictly, a 90-degree rotated) rectangular clip, you can use glScissor to clip, which is ‘free’. For arbitrarily shaped clips, or where the clip is transformed in some awkward way, the options are:

- stencils (which I think is what ‘old’ Shiva uses)

- intermediate framebuffers

- geometric clipping but that’s a royal pain in the ass to combine with gradients / dash patterns

BTW this is why you should be super careful about using clips in a canvas: rectangular ones which are only translated / scaled are very efficient (due to glScissor), but anything else can bite hard, especially on systems where the chosen solution (again, e.g. stencils) is not a common/fast path.[1]
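The distinction between ‘free’ and expensive clips can be illustrated with a minimal sketch. It assumes the clip's transform is available as the 2x2 linear part of a 2D affine matrix; the `Linear2D` type and the `clipStaysAxisAligned` function are hypothetical names made up for this example and are not part of Canvas, Shiva or ShaderVG. The check only tells whether a rectangular clip stays axis-aligned after the transform, which is the case where a scissor rectangle could be used.

```cpp
#include <cmath>

// Hypothetical helper type (not part of SimGear): the 2x2 linear part of a
// 2D affine transform. The translation is omitted because it never changes
// whether a rectangle stays axis-aligned.
//   | a  b |
//   | c  d |
struct Linear2D {
    double a, b, c, d;
};

// Returns true when the transform maps axis-aligned rectangles to
// axis-aligned rectangles: either a pure (possibly mirrored) scale,
// where b and c are zero, or a scale combined with a 90-degree
// rotation, where a and d are zero.
bool clipStaysAxisAligned(const Linear2D& m, double eps = 1e-9)
{
    const bool scaleOnly   = std::fabs(m.b) < eps && std::fabs(m.c) < eps;
    const bool quarterTurn = std::fabs(m.a) < eps && std::fabs(m.d) < eps;
    return scaleOnly || quarterTurn;
}
```

A clip for which this returns false (for example a 45-degree rotation) would have to fall back to one of the more expensive options listed above.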

There is the separate issue of Canvas being a little slow for some people, but that’s a separate task which we need to work on, and will change when we switch from Shiva to something more modern (as of 03/2023, #ShaderVG seems like the most likely candidate, for details see below). [2]

At the moment there are four versions of ShivaVG/ShaderVG in SimGear.

In next there is the ancient version of ShivaVG (probably OpenGL compatibility profile) and ShaderVG which is based on the ancient version of ShivaVG but adds shaders for rendering paths and color maps.

In the topics/shivavg_update branch there is an updated version of ShivaVG which requires OpenGL 2.1 (and therefore, I think, leaves the OpenGL compatibility profile behind) and a version of ShaderVG where the changes between ShivaVG and ShaderVG have been applied on top of the new ShivaVG.

For the time being, the first thing to check is probably whether the version of ShivaVG in the topics/shivavg_update branch is enough to get the core profile working. I suspect it might be.[3]

Status

- September 28th, 2023: Fernando is sharing very promising progress updates relating to the Core Profile migration.[4]

- April 15th, 2023: Scott is hoping to investigate the remaining issues.[5] He took a closer look at the latest ShivaVG and confirmed that it targets the compatibility profile, not the core profile. He thinks it is best to discard it as a viable option given our goals toward core profile, and said he will start exploring ShaderVG, which he believes is a good path forward.[6]

- April 13th, 2023: The non-shader code paths are now working correctly, but as soon as one or both of the shaders are used things get whacky, and I suspect it has something to do with what used to be the ModelView matrix, which doesn't match between OpenSceneGraph and the shaders.[7]

- March 8th, 2023: Erik seems to have got basic ShaderVG integration working, with few remaining issues (FGData commit 6166483).[8]

- March 4th, 2023: Erik started adding custom shaders to FG for ShaderVG

- Feb 17th, 2022: Erik added ShaderVG to SimGear on next including a corresponding cmake build option

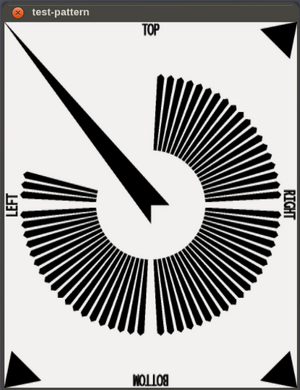

Testing

| Caution: Any changes we make to Canvas rendering need to be strict about compatibility: at least to pixel level, ideally even sub-pixel (but sub-pixel might be hard to achieve). Therefore we should really understand any differences before touching any aircraft, and for sure check the FG-1000, the A320 displays, Henning’s CRJ and have someone check the shuttle.[9] |

bugman once came up with the idea of taking screenshots and using those for automated unit testing of these things. He discussed the idea in detail with James and Stuart on the list back then, specifically including the idea of "Headless rendering that can be captured as a bitmap".[10]

The idea back then was to render frames to bitmaps on disk or in memory for per-pixel checks for regression testing purposes.[11]

Edward stated: we will need to implement a true "headless" mode. Querying the scene graph, including performing scene graph dumps, could be useful for quite a number of tests. Also of interest, especially for debugging graphics card driver issues, would be switching to offline rendering to dump to a bitmap and check individual pixels.[12]

Referring to the Canvas specifically, it is trivial to add a screen shot handler, to take canvas-specific screen shots and dump those to disk to run a binary diff (or do so in memory): Canvas_troubleshooting#Serializing_a_Canvas_to_disk_.28as_raster_image.29

It might even be possible to add a new Canvas.Path2 element, to run Shiva and ShaderVG in the same fgfs executable and execute those tests side-by-side that way.[13]

In the mid-term, the idea is to accept a boolean flag at the Canvas level (for each canvas) so that we can decide which back-end to use. At that point, it would be trivial to run existing Canvas code with both back-ends within the same binary, each rendering to a separate canvas FBO.

And then, all that we'd need to do is to use something like memcmp() to compare two Canvas FBOs/textures side-by-side.

We could expose the equivalent of a compare-canvas fgcommand that takes the SGPropertyNode/path of two canvas textures and then runs memcmp() in a for-loop for each pixel.
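The per-pixel comparison could look roughly like the following sketch. It assumes both canvas textures have already been read back into CPU memory as tightly packed 8-bit RGBA; how that readback would be done, and how an fgcommand would be wired up, is not covered here. `CanvasPixels` and `countDifferingPixels` are hypothetical names for illustration only, not existing SimGear or FlightGear APIs.

```cpp
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

// Hypothetical CPU-side copy of a canvas texture (assumption: tightly
// packed RGBA with 8 bits per channel, i.e. 4 bytes per pixel).
struct CanvasPixels {
    int width = 0;
    int height = 0;
    std::vector<std::uint8_t> rgba; // expected size: width * height * 4
};

// Returns the number of pixels that differ between the two images,
// or -1 if the images cannot be compared (size mismatch).
long countDifferingPixels(const CanvasPixels& a, const CanvasPixels& b)
{
    const std::size_t bytesPerPixel = 4;
    if (a.width != b.width || a.height != b.height)
        return -1;

    const std::size_t pixelCount = static_cast<std::size_t>(a.width) * a.height;
    if (a.rgba.size() != pixelCount * bytesPerPixel || b.rgba.size() != a.rgba.size())
        return -1;

    long differing = 0;
    for (std::size_t i = 0; i < pixelCount; ++i) {
        const std::size_t offset = i * bytesPerPixel;
        // memcmp() over the 4 bytes of one pixel, as suggested above.
        if (std::memcmp(&a.rgba[offset], &b.rgba[offset], bytesPerPixel) != 0)
            ++differing;
    }
    return differing;
}
```

Returning a count rather than a plain yes/no is a design choice of this sketch: it makes it easier to judge whether a difference between the two back-ends is a handful of edge pixels or a wholesale rendering failure.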

If this sort of functionality could be added to next, it would enable early-adopters/core devs and fgdata committers to get involved in testing/troubleshooting, without breaking next/fgfs for users not wanting to tinker with ShaderVG at this point.

Later on, we could either expose ShaderVG via a new dedicated Canvas.Path2 namespace (totally new and separate), and phase out Canvas.Path over time.

Or, if the port works well enough, support a custom uint "version number" for sc::Element, so that people can easily adopt the new version by setting the required version to "2" for their Canvas.Path instance (with 1 being the current default).

This could go a long way to help people get involved in testing and troubleshooting, without having to be C++ developers, also all the deployment would be handled automatically.

And a corresponding fgcommand (or cppbind wrapper) to do a pixel/bit-wise comparison of two Canvas textures would seem like a straightforward thing to implement, if we can figure out the other parts.

To be really on the safe side, we could even implement unit tests in Nasal space for each OpenVG primitive and do a comparison of both textures after each instruction (optionally).

One simple test case would be to use SVG files (which are internally converted to OpenVG instructions via svg.nas) and then parse/load those to compare the functionality of both back-ends, while diff'ing the two resulting Canvas FBOs pixel by pixel.

If things are too difficult to port properly, introducing a dedicated Canvas.Path2 namespace while phasing out Canvas.Path, would seem like a straightforward option to help migrate to Core Profile, without having people complain that Canvas.Path (Shiva) no longer works - because there would be no guarantee it actually does for people using the new namespace...

Another option would be adding a new boolean property to each Canvas for an optional "shadow" canvas. That way the CanvasMgr itself could optionally update two textures in parallel, one using the original Shiva back-end and the other using the new ShaderVG-based back-end. This sort of infrastructure should also come in very handy if/when other Canvas elements need to be updated in the time to come.

Basically, if an "enable-canvas-tracking" property is set, another ODGauge FBO would be set up, which would receive updates from the new sc::Element/back-end. This could optionally happen per frame (costly), at a configurable interval, or even per OpenVG instruction.

Headless

More generally, Edward stated that this is an area that he had in mind for the test suite. However the basic infrastructure of starting up a minimal headless scene graph rendering to a buffer has not been implemented yet.

So, someone needs to look at OSG and work out how to set up headless rendering in a way that you can capture a bitmap of the scene. The rest is then a piece of cake.

He suggested talking to James about this, as he might be able to quickly implement a simple headless infrastructure and be able to capture the issue. This original Canvas Path issue looks like the perfect excuse to implement this test suite infrastructure that, in the future, will be immeasurably useful!

Besides, the previous headless code is not of much use. For the test suite you probably only need a one-line code change to render to a 'pbuffer' in OSG, and an additional option to render to a texture. What is relevant is a short function starting up the minimal set of subsystems (and currently non-subsystem elements) to render a basic fgfs scene graph, and maybe a second function to set up what is required to capture this issue. The key is that the main bootstrap routine used in fgfs is not used in the test suite, but Fred's contribution assumes this full bootstrapped system is up and running.

In the test suite we don't want that full system, but an absolute minimum set of subsystems and other code running. There will be a set of helper functions (in test_suite/FGTestApi/) to set up different OSG components for different tests. The complication is that most subsystems will segfault if they are not in normal bootstrap position - you are already well aware of their brittleness. Another complication is that certain elements are not subsystems, e.g. FGSky. So the difficulty is not the headless part but simply setting up the minimal subset, fixing up all the segfaulting.

ShaderVG

| Caution: ShaderVG support is quite experimental at this stage. If you do not want to pull the latest changes, you can also try to compile SimGear explicitly with USE_SHADERVG=OFF during the cmake step to ensure it's disabled.[14] |

Status: As of 03/2022, Erik added ShaderVG next to ShivaVG in SimGear next for testing (SimGear commit 6451e50). The local patches applied to ShivaVG for FlightGear have also been applied to ShaderVG where appropriate, but it's not yet fully functional.[17]

To get involved in testing, use the CMake option USE_SHADERVG.

Refer to related commits for details, see SimGear commit 074ba34.

In 03/2023, Erik shared an update stating that he has found the root of the problem: in VGInitOperation.cxx the size of the OpenGL viewport is passed to vgCreateContextSH, and it is always (0, 0, 800, 600) for some reason. ShaderVG takes this input as the maximum area to draw, thereby ignoring the "view" property when creating a new canvas in Nasal. If he manually matches the parameters passed to vgCreateContextSH with the canvas "view" parameters, everything renders okay. One of the problems is that VGInitOperation is called from OSG.

Now he needs to find out where that is done and how to make it retrieve Canvas::getViewport instead.[18]

In April 2023, Erik mentioned a few remaining problems probably related to this: If I disable shader use in the code most of it seems to work properly.

But as soon as one or both of the shaders are used things get whacky, and I suspect it has something to do with what used to be the ModelView matrix, which doesn't match between OpenSceneGraph and the shaders.

And to be honest, I don't know enough about both of them to fix this issue. So I would greatly appreciate it if someone with more knowledge on the subject could take a look.

The shaders have been moved from the code to FGDATA/gui/shaders/.[19]

Erik's most recent update states that he has basic integration working now, with few remaining issues.[20] For the corresponding commits, refer to FGData commit 6166483 and SimGear commit 64be445.

An email on the devel-list in early 2022 mentioned that NanoVG (see below) may not be super simple to drop in, so another idea was using ShaderVG, which seems to follow exactly the same API as ShivaVG.[21]

Early experiments (patch [1]) in 02/2022 with ShaderVG were promising and suggest that it ‘kind of sort of’ works, which is already less work than integrating NanoVG.[22]

ShaderVG is a fork of a fork of the original ShivaVG which seems to not use the fixed OpenGL pipeline (well, at least it doesn’t have any glBegin/glEnd calls etc).

It uses glScissor for clipping, which is fine. vgMaskImage is unimplemented, but we could add it if anyone ever requested it.

The actual path filling / stroking uses stencil ops: this avoids the need to specify a custom framebuffer internally. Of course Canvas is often rendering using an OSG FrameBuffer, but that’s the same today. (Maybe ‘old’ Shiva also uses the stencil ops the same way.)

All gradient / pattern filling is handled using shaders, which is very nice and should make using linear/radial gradients and patterns much better than with the existing Shiva. (This is the same as NanoVG.)[23]

Also, running a diff against ShivaVG from git, it turns out that FlightGear does have a number of local changes compared to a clean ShivaVG, among them "setup GL projection" changed to "We handle viewport and projection ourselves". Something similar is probably required for ShaderVG.[24]

For the time being, it's not a one-to-one drop-in replacement, but it works quite nicely:

- For one, the x,y positions are off compared to ShivaVG, making things slightly bigger (maybe too big for the screen).

- There is also an issue with the "title bar" being opaque.[25]

Possibly, ShaderVG is writing to a texture buffer on the graphics card, but for some reason a different texture buffer is being referenced by FG - in one case the building texture and in another a random bit of graphics card memory.[26]

NanoVG

Stuart wondered whether we could use NanoVG to create the texture from the vector data, as he thought we already include it for Canvas.[27] Canvas uses ShivaVG, but there is a GPU implementation of it (called ShaderVG) waiting to be included after some bugs are ironed out.[28]

The original idea was to replace Shiva with NanoVG (https://github.com/inniyah/nanovg), which should be small enough to drop in directly *and*, we believe, supports the required Path rendering features which Canvas needs (e.g., line thickness, dot/dash, clipping). Unlike Shiva, NanoVG can target Core-profile OpenGL, using its own shaders, and because they both aim to implement the same VG spec, it should be close enough at the pixel level to keep everything working. (Let’s see how that works in reality.)

What could then be added to Canvas, is the option to use shaders when using a Canvas Image. Qt Quick calls this a ‘layer’ (like in Photoshop): basically when drawing this intermediate texture (which is what an Image or Canvas-in-a-Canvas is), add the option to use a custom shader in a very similar manner to an ALS filter. That would definitely be a nice enhancement to Canvas capabilities.[29]

CPU renderer

James has been suggesting for some time that it should be possible to render Canvas using the CPU (not the GPU), which could be a win for us on older machines. Even with us switching from ShivaVG to NanoVG for GPU accelerated drawing, there is some chance it’s still worth pursuing this.

(The basic idea is to use one of the many software VG renderers, e.g. Cairo or something smaller. This would trade GPU time for CPU time: almost certainly a bad idea on modern GPUs, but if the GPU is already the bottleneck and CPU cores are idle, it could potentially work well on older machines to make our use of resources more balanced.)

It would be ‘some work’ but much less work than every aircraft dev duplicating their entire Canvas work. If someone is ever interested to try this, just ping James Turner, it’s quite a nice self-contained project.[30]