Canvas view camera element



| Started in | 08/2016 (prototyped by F-JJTH) |
|---|---|
| Description | Adds support for rendering slave scenery views to an FBO/RTT (offscreen texture) |
| Maintainer(s) | none |
| Contributor(s) | F-JJTH, Icecode GL, Hooray, cyrfer [1][2], Stuart (review [3]) |
| Status | experimental/known issues (being prepared for review/integration) |

Last updated in 09/2017

Summary


Several aircraft developers have expressed interest in being able to render to a texture (RTT, i.e. a render target) and use it inside cockpits as mirrors, external cameras (so-called tail cams) and for other purposes. Effects and shader developers have also reached a point where ignoring RTT is a waste of resources and a limiting factor when creating new effects. Although this Canvas element is mainly directed towards the first need, it is a first step towards finally exposing render-to-texture capabilities to non-C++ space too.

Use Cases

- Tail Cams

- Mirrors

- In-sim view configuration

- On demand creation of views and windows (e.g. FGCamera previews)

- Prototyping/testing HUDs or PFDs requiring synthetic terrain to work properly

Status

We have a basic prototype, provided by F-JJTH, who developed the whole thing in mid-2016. There are a few issues, and it's using the legacy approach for implementing OD_gauge based avionics, i.e. not yet using the Canvas system.

We also have a quick, but working, integration in the form of a custom Canvas element provided by Icecode GL. Originally, the image was mirrored because of different coordinate systems. Apparently the missing piece was to use FG's view manager, which is now fixed, thanks to Clement's contribution.

Who knows, with cleaner code and some tweaks we could have a deferred renderer and RTT capabilities in Canvas space, as long as the effect framework can access these RTT contexts, as Hooray proposed multiple times.[4]

Also, Stuart offered to help review any patches and get them committed. Please ping Stuart when you think the code is worth checking in. It doesn't have to be perfect, particularly once the current release (2017.3) is out. [5]

The Future

For now, we are focusing on implementing camera views in Canvas. We also keep in mind that this might be important groundwork for eventual RTT support for effects/shaders. For that we obviously need a link between Canvas and Effects. It is actually a good thing that both systems are completely separate and don't know of each other, since that gives us more freedom when it comes to joining them. This link is delicate and has to be planned carefully.[6]

Shaders can receive many input textures, and modern shaders support MRT (Multiple Render Targets) too. It makes sense to come up with some kind of well-defined link between Canvas and Effects that allows for inputting/outputting canvases via effects. This would allow the same things that per-element effects would allow, but with more flexibility and less overlapping functionality. [7]

It would also be possible to come up with an XML-configurable rendering pipeline. In fact, Zan once came up with a modified CameraGroup design that would move the whole hard-coded pipeline to XML space (see Canvas Development#Supporting Cameras).[8]

Ideally, something like this would be integrated with the existing view manager, i.e. using the same property names (via property objects), and then hooked up to CanvasImage, e.g. as a custom camera:// protocol (we already support canvas:// and http(s)://). So some kind of dedicated CanvasCamera element would make sense, possibly inheriting from CanvasImage.

And it would also make sense to look at Zan's new-cameras patches, because those add tons of features to CameraGroup.cxx. This would already allow arbitrary views slaved to the main view (camera).

As has been said previously, the proper way to support "cameras" via Canvas is using CompositeViewer, which does require re-architecting several parts of FG (see CompositeViewer Support). Given the current state of things, that seems at least another 3-4 release cycles away. So, short of that, the only thing that we can currently support with reasonable effort is "slaved views" (as per $FG_ROOT/Docs/README.multiscreen). That would not require too much in terms of coding, because the code is already there. In fact, CameraGroup.cxx already contains an RTT/FBO (render-to-texture) implementation that renders slaved views to an offscreen context. This is also how Rembrandt buffers are set up behind the scenes. So basically, the code is there; it would need to be extracted/generalized and turned into a CanvasElement, and possibly integrated with the existing view manager code. And then, there is also Zan's newcameras branch, which exposes rendering stages (passes) to XML/property tree space, so that individual stages are made accessible to shaders/effects. Thus, most of the code is there, and it is mainly a matter of integrating things. That would require someone able to build SG/FG from source, familiar with C++ and willing/able to work through some OSG tutorials/docs to make this work.[10]

Canvas is/was primarily about exposing 2D rendering to fgdata space, so that fgdata developers could develop and maintain 2D rendering related features without having to be core developers (core development being an obvious bottleneck, as well as having a significant barrier to entry). In other words, people would need to be convinced that they want to let Canvas evolve beyond the 2D use-case, i.e. by allowing effects/shaders per element, but also by letting cameras be created/controlled easily. Personally, I do believe that this is a worthwhile thing to aim for, as it would help unify (and simplify) most RTT/FBO handling in SG/FG, and make this flexibility available to people like Thorsten, who have a track record of doing really fancy, unprecedented stuff. Equally, there are tons of use-cases where aircraft/scenery developers may want to set up custom cameras (A380 tail cam, space shuttle) and render those to an offscreen texture (e.g. GUI dialog and/or MFD screen). It is true that "slaved views" are rather limited at the moment, but they are also comparatively easy to set up, so I think that supporting slaved camera views via Canvas could be a good way to bootstrap/boost this development and pave the way for CompositeViewer adoption/integration in the future. [11]

Gallery

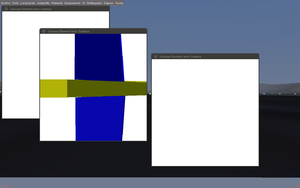

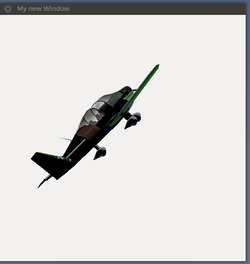

An experimental Canvas element to render slaved scenery views to a custom Canvas texture, to be used for creating custom tail-cams/mirror textures and so on

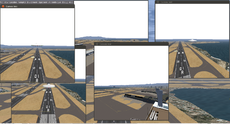

Canvas GUI dialog with a custom Canvas element to display view manager views (based on code prototyped by F-JJTH)

Getting involved

Icecode GL has been quite interested in this subject lately. [...] For shader support it'd use the current effects framework, and to display stuff on the screen it'd either use Canvas or an OSG window directly. If you are interested, feel free to contact him so he can share with you some code and pointers/ideas that he's been collecting along the way. I guess that'd be better than starting from zero.[12]

The Canvas system is currently constrained to being 2D specific, i.e. to be useful for any of the "3d scene" stuff you're interested in, you'd need to read up on adding new/custom elements, e.g. those useful for 3D stuff, and effects/shaders specifically.

As Icecode GL mentioned, this is something that he's been tinkering with lately. Like he also mentioned, based on Zan's groundwork (newcameras), we are looking at exposing a similar degree of flexibility via the Canvas system by introducing new elements and/or additional "modes".

For starters, this will probably only involve a new view-manager based Canvas view to render a slave scenery view to a Canvas texture. The next incarnation may include effects/shader support to customize such a slave view.

Besides, it would also be possible to render a totally independent scene/osg::Node, which is something that we once prototyped to load 3D models from disk and rotate/transform those using an osg::PositionAttitudeTransform (see Howto:Extending Canvas to support rendering 3D models).

Implementation details / for the reviewer

| Note This section provides a rough overview for the potential reviewer, and is also intended to be used as the commit message when/if this gets committed |

This set of patches (touching SimGear and fgdata) implements a new Canvas::Element by creating a sub-class named Canvas::View. The meat of it is in the constructor, i.e. Canvas::View::View(), where an off-screen camera (RTT/FBO) is set up. The FGCanvasSystemAdapter file has been extended to provide access to the FlightGear view manager in order to compute/obtain the view-specific view matrix, which is then used by this new Canvas view element to update the offscreen camera in Canvas::View::update() accordingly.

BTW: This is also a good way to stress-test the renderer, as new cameras can be easily added to the scene at runtime, so that the impact of doing so can be easily measured.

The patch is experimental. It will basically look up a view and dynamically add a slave camera to the renderer that renders the whole thing to a Canvas. A Canvas is a fancy word for an RTT/FBO context in FlightGear that can be updated by using a property-based API built on top of the property tree, in the form of events/signals that are represented via listeners. Which is to say, each Canvas has a handful of well-defined property names (and types) that it is watching to handle "events", such as changing the size/viewport. And then there is a single top-level root group, which serves as the top-level element to keep other Canvas elements.

A Canvas element is nothing more than a rendering primitive that the Canvas system can handle: for example, a raster image can be added to a Canvas group, as can a text string/font and 2D drawing primitives in the form of OpenVG instructions mapped to ShivaVG. And that's basically about it (with a few exceptions that handle use-case specific stuff like 2D mapping/charts).

Apart from that, the main thing to keep in mind is that a Canvas is really just an FBO, i.e. an invisible RTT context. To become actually visible, you need to add a so-called "placement", which tells the rendering engine to look up a certain canvas and add it to the scene/cockpit or the GUI (dialogs/windows).

So far, all of this is handled using native code that watches the global /canvas tree in the property tree: there is a canvas manager that handles events and passes them on to the corresponding canvas instance and its child elements.

Realistically, however, all Canvas textures are instantiated/updated using scripting space hooks that end up writing to the corresponding properties in the global property tree. This makes it much easier to manipulate a canvas/element, because you don't need to do any low-level getprop/setprop stuff, but can directly use an element-specific API.[14]

To actually test the new element using the Nasal Console, paste the following snippet of code into it:

    var (width, height) = (512, 512);
    var ELEMENT_NAME = "viewcam";

    # open a dialog window showing one element of type ELEMENT_NAME
    var display_view = func(view=0) {
        var title = 'Canvas test:' ~ ELEMENT_NAME;
        var window = canvas.Window.new([width, height], "dialog").set('title', title);
        var myCanvas = window.createCanvas().set("background", canvas.style.getColor("bg_color"));
        var root = myCanvas.createGroup();
        var child = root.createChild( ELEMENT_NAME );
        # child.set("view-number", view);
    } # display_view()

    # one window per view found under /sim/view[n]
    var totalViews = props.getNode("sim").getChildren("view");
    forindex(var v; totalViews) {
        display_view(view: v);
    }

Aircraft developers can use the new Canvas view element simply by using the conventional approach to replace a static texture with a Canvas using a cockpit placement.

Roadmap

| Note There is a related patch available at https://forum.flightgear.org/viewtopic.php?f=71&t=23929#p317448 |

- extend FGCanvasSystemAdapter to make the view manager available there [15]

- come up with a new Canvas element inheriting from Canvas::Image

- add Clement's camera setup routines

- make the camera render into the texture used by the sub-class

- this should be named viewmgr-camera or something like that to make it obvious what it is doing

Testing

Once we have a basic prototype working, the new camera element needs to be tested, specifically:

- using different resolutions (resizing at runtime?)

- displaying multiple/independent camera views

- all supported Canvas placements

- shaders and effects

- ALS

- Rembrandt

- Reset/re-init

- OSG threading modes

Known Issues

- texture/view matrix updates don't currently take effect unless .update() is specifically invoked

- skydome handling (probably missing effect/shader or wrong root node): Done (by Icecode_GL)

- change DATA_VARIANCE for texture setup, analogous to the Canvas::Image::Image ctor

- make the size of the texture re-configurable using properties

Troubleshooting

- update the texture specific osg::StateSet to force an update of the texture

- compare the scenegraph generated for sc::View vs. sc::Image using http://wiki.flightgear.org/Canvas_Troubleshooting#Dumping_Canvas_scene_graphs_to_disk

- check whether sub-classing sc::Image and directly using its _texture makes any difference or not

- if it does, we could just as well add support for a custom view://by-number/ protocol to the src/filename handling helper

- if all else fails, use an osg::Image and assign it to the texture and invoke its dirty() method to update the whole thing
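The view://by-number/ idea above could be prototyped independently of OSG. The following is only a minimal sketch, assuming the protocol takes the form view://by-number/N, where N is a view index; the function name parseViewUrl and the four-digit limit are hypothetical and not part of the existing src/filename handling code.

```cpp
#include <cctype>
#include <string>

// Hypothetical helper: extracts the view index from "view://by-number/<n>".
// Returns true and stores the index in viewNumber on success; returns false
// (leaving viewNumber untouched) for any other string.
bool parseViewUrl(const std::string& src, int& viewNumber) {
    static const std::string prefix = "view://by-number/";

    // The source must start with the protocol prefix.
    if (src.compare(0, prefix.size(), prefix) != 0)
        return false;

    // Everything after the prefix must be a short, purely numeric index.
    const std::string digits = src.substr(prefix.size());
    if (digits.empty() || digits.size() > 4)  // arbitrary limit, avoids overflow
        return false;

    int value = 0;
    for (unsigned char c : digits) {
        if (!std::isdigit(c))
            return false;
        value = value * 10 + (c - '0');
    }

    viewNumber = value;
    return true;
}
```

A caller in the image element would try this first and fall back to the regular canvas:// and http(s):// handling whenever it returns false.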

Ideas

- do we need support for looking up view numbers by name ?

- support draw-masks for scene graph features (terrain, skydome, models etc.): "it'd be beneficial in the long run to let the user choose what part of the scene graph they want to show. This would be useful for deferred rendering schemes, where sometimes you don't need to display the whole scenery to save some precious frames. Canvas makes that easy with all of its property handling"

- work out what is needed for synthetic terrain views [1]

- support effects/shaders: This is fairly straightforward to do, just create a EffectsGeode, fill it with a "compiled" .eff, and substitute the standard osg::Geode for it. But I don't think that's going to give us the flexibility we need. A shader won't be able to access several canvases at the same time if we do things that way.

- introduce some sort of STL cache using something like std::map<std::string, osg::Texture2D> to only ever update/draw a view once, no matter how often it is shown by different elements/placements ?
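The cache idea from the last item could look roughly like the sketch below. It is not taken from the patch: Texture is a stand-in for osg::Texture2D so that the example builds without OSG, and ViewTextureCache, acquire() and the factory callback are hypothetical names. Weak pointers are used so that a texture is released once the last element showing that view goes away.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <map>
#include <memory>
#include <string>
#include <utility>

// Stand-in for osg::Texture2D (assumption, keeps the sketch OSG-free).
struct Texture {
    int width = 0;
    int height = 0;
};

// Hypothetical cache: one shared render target per view, keyed by a view id,
// so that a view is only set up and drawn once however many elements show it.
class ViewTextureCache {
public:
    using Factory = std::function<std::shared_ptr<Texture>(const std::string&)>;

    explicit ViewTextureCache(Factory factory) : _factory(std::move(factory)) {
        assert(_factory && "a texture factory is required");
    }

    // Returns the texture for a view, creating it on the first request only.
    std::shared_ptr<Texture> acquire(const std::string& viewId) {
        auto it = _textures.find(viewId);
        if (it != _textures.end()) {
            if (auto alive = it->second.lock())
                return alive;      // already in use by another element
            _textures.erase(it);   // last user went away, drop the stale entry
        }
        auto created = _factory(viewId);
        _textures[viewId] = created;
        return created;
    }

    // Number of map entries (may include entries whose texture has expired).
    std::size_t size() const { return _textures.size(); }

private:
    Factory _factory;
    std::map<std::string, std::weak_ptr<Texture>> _textures;
};
```

Two elements asking for the same view id would then share one texture, while a different view id triggers exactly one additional factory call.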

Performance / Optimizations

- Configurable refresh rate per view. 15 fps might be enough for an external camera; we don't need to draw at the maximum rate.[16]

- provide a view-cache using a STL std::map<> ?

- use PBOs [2]

- consider hooking up the whole thing to the FGStatsManager (OSG stats) ?

- Torsten implemented some PagedLOD scheme to only render AI entities/objects if they're actually visible in terms of pixels on the screen [17] [18]

- check if CullThreadPerCameraDrawThreadPerContext works, see Howto:Activate multi core and multi GPU support
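The per-view refresh rate from the first item can be illustrated with a small frame limiter. This is a sketch under assumptions rather than code from the patch: RefreshLimiter and shouldRender() are hypothetical names, and the element's update method is assumed to receive the frame time in seconds.

```cpp
#include <cassert>

// Hypothetical helper deciding whether a camera view is due for a redraw.
class RefreshLimiter {
public:
    explicit RefreshLimiter(double maxFps) : _interval(1.0 / maxFps) {
        assert(maxFps > 0.0 && "refresh rate must be positive");
    }

    // dt: seconds elapsed since the previous main-loop frame.
    // Returns true when the view should be rendered in this frame.
    bool shouldRender(double dt) {
        _elapsed += dt;
        if (_elapsed < _interval)
            return false;          // not due yet, keep showing the old texture
        _elapsed -= _interval;     // carry the remainder over to the next cycle
        if (_elapsed > _interval)
            _elapsed = 0.0;        // after a long stall, don't render in bursts
        return true;
    }

private:
    double _interval;        // minimum time between two redraws, in seconds
    double _elapsed = 0.0;   // time accumulated since the last redraw
};
```

An element could keep one limiter per view and skip the camera update whenever shouldRender() returns false; the rate itself would presumably come from a property on the element.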

Base Package

Nasal Console

After patching and rebuilding SimGear/FlightGear respectively, and applying the changes to api.nas in the base package, the following can be pasted into the Nasal Console for testing purposes:

    # TODO: this must match the TYPE_NAME used by the C++ code
    var ELEMENT_NAME = "view-camera"; # to be adapted according to the C++ changes

    var myElementTest = {
        ##
        # constructor
        new: func( camera ) {
            var m = { parents: [myElementTest] };
            m.dlg = canvas.Window.new([camera.width, camera.height], "dialog");
            m.canvas = m.dlg.createCanvas().setColorBackground(1,1,1,1);
            m.root = m.canvas.createGroup();

            ##
            # instantiate a new element
            m.myElement = m.root.createChild( ELEMENT_NAME );
            # set the view-number property
            m.myElement.set("view-number", camera.view);

            m.dlg.set("title", "view #" ~ camera.view); # TODO look up proper title/name
            return m;
        }, # new
    }; # end of myElementTest

    # TODO: get list of all views ?
    var cameras = [
        {view: 0, width: 640, height: 480},
        {view: 1, width: 320, height: 160},
        {view: 2, width: 320, height: 160},
    ];

    foreach(var cam; cameras) {
        var newCam = myElementTest.new( cam );
    }