Howto:Processing d-tpp using Python

Revision as of 17:18, 28 November 2017

This article is a stub. You can help the wiki by expanding it.

Motivation

Come up with the Python machinery to automatically download aviation charts from http://155.178.201.160/d-tpp/ and classify them for further processing/parsing (data extraction).

We will be downloading two different AIRAC cycles, at the time of writing 1712 and 1713.

Each cycle directory contains a set of charts that will be post-processed by converting them to raster images.
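As a minimal sketch, the per-cycle directory URLs can be derived from the base URL given above. The names `BASE_URL`, `CYCLES` and `cycle_url` are illustrative and not part of the original script, and the sketch assumes that both cycles follow the same directory layout as the 1712 URL used elsewhere on this page.

```python
BASE_URL = "http://155.178.201.160/d-tpp/"
CYCLES = ["1712", "1713"]  # the two cycles named in the text


def cycle_url(cycle, base=BASE_URL):
    """Return the directory URL for one cycle (hypothetical helper)."""
    return "{}{}/".format(base, cycle)


for cycle in CYCLES:
    print(cycle_url(cycle))
```

Keeping the cycle identifiers in one list means a later cycle only needs to be added in a single place.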

Data sources

Chart Classification

- STARs - Standard Terminal Arrivals

- IAPs - Instrument Approach Procedures

- DPs - Departure Procedures
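The three chart classes above could be represented in code roughly as follows. This is only a sketch: the enum `ChartClass`, the function `classify`, and the assumption that charts are tagged with the short codes `STAR`, `IAP` and `DP` are illustrative and should be checked against the actual data.

```python
from enum import Enum


class ChartClass(Enum):
    """Chart classes of interest (codes are assumed, see lead-in)."""
    STAR = "Standard Terminal Arrivals"
    IAP = "Instrument Approach Procedures"
    DP = "Departure Procedures"


def classify(chart_code):
    """Map a chart code string to a ChartClass, or None for any other code."""
    try:
        return ChartClass[chart_code.strip().upper()]
    except KeyError:
        return None


print(classify("iap"))
print(classify("APD"))  # not one of the three classes listed above
```

Returning `None` for unknown codes lets callers skip chart types that are not relevant for classification.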

Modules

XML Processing

http://155.178.201.160/d-tpp/1712/xml_data/d-TPP_Metafile.xml

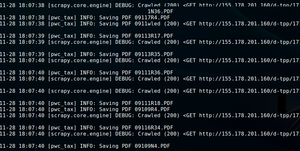
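A possible way to read the metafile with the standard library is sketched below. The element and attribute names (`airport_name`, `apt_ident`, `record`, `chart_code`, `chart_name`, `pdf_name`) are assumptions about the metafile layout and should be verified against the real file; the embedded sample is hand-written placeholder data, not taken from the metafile.

```python
import xml.etree.ElementTree as ET

# Hand-written sample mimicking the assumed layout; not real metafile data.
SAMPLE = """<digital_tpp cycle="1712">
  <state_code ID="XX">
    <city_name ID="SAMPLE CITY">
      <airport_name ID="SAMPLE AIRPORT" apt_ident="XXX">
        <record>
          <chart_code>IAP</chart_code>
          <chart_name>ILS OR LOC RWY 01</chart_name>
          <pdf_name>SAMPLE1.PDF</pdf_name>
        </record>
        <record>
          <chart_code>DP</chart_code>
          <chart_name>SAMPLE ONE</chart_name>
          <pdf_name>SAMPLE2.PDF</pdf_name>
        </record>
      </airport_name>
    </city_name>
  </state_code>
</digital_tpp>"""


def iter_charts(root):
    """Yield (airport ident, chart code, chart name, PDF name) per record."""
    for airport in root.iter("airport_name"):
        for record in airport.iter("record"):
            yield (
                airport.get("apt_ident"),
                record.findtext("chart_code"),
                record.findtext("chart_name"),
                record.findtext("pdf_name"),
            )


charts = list(iter_charts(ET.fromstring(SAMPLE)))
for chart in charts:
    print(chart)
```

For a downloaded copy of the metafile, `ET.parse("d-TPP_Metafile.xml").getroot()` would replace `ET.fromstring(SAMPLE)`.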

Scraping

import os

import scrapy
from scrapy.crawler import CrawlerProcess
from scrapy.http import Request


def createFolder(directory):
    """Create the output directory if it does not exist yet."""
    try:
        if not os.path.exists(directory):
            os.makedirs(directory)
    except OSError:
        print('Error: Creating directory. ' + directory)


class dTPPSpider(scrapy.Spider):
    name = "pwc_tax"  # spider name; any unique string works
    allowed_domains = ["155.178.201.160"]
    start_urls = ["http://155.178.201.160/d-tpp/1712/"]

    def parse(self, response):
        # Follow only links to PDF files; directory links would yield an
        # empty file name in save_pdf and make open() fail.
        for href in response.css('a::attr(href)').extract():
            if href.lower().endswith('.pdf'):
                yield Request(
                    url=response.urljoin(href),
                    callback=self.save_pdf
                )

    def save_pdf(self, response):
        # Use the last URL component as the local file name
        path = response.url.split('/')[-1]
        self.logger.info('Saving PDF %s', path)
        with open('./PDF/' + path, 'wb') as f:
            f.write(response.body)


process = CrawlerProcess({
    'USER_AGENT': 'Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1)'
})

createFolder('./PDF/')
process.crawl(dTPPSpider)
process.start()  # the script will block here until the crawling is finished

Downloading
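For single files, such as the metafile, a plain HTTP download with the requests package listed under Prerequisites may be enough. The following is a minimal sketch; the helper names `local_name` and `download`, the default directory and the timeout are assumptions, not part of the original page.

```python
import os


def local_name(url):
    """Return the last path component of a URL, used as the local file name."""
    return url.rstrip("/").split("/")[-1]


def download(url, dest_dir="./PDF/", timeout=30):
    """Stream one file into dest_dir and return the local path."""
    import requests  # third-party; imported here so local_name() works without it

    os.makedirs(dest_dir, exist_ok=True)
    target = os.path.join(dest_dir, local_name(url))
    response = requests.get(url, stream=True, timeout=timeout)
    response.raise_for_status()  # fail loudly on HTTP errors
    with open(target, "wb") as f:
        for chunk in response.iter_content(chunk_size=65536):
            f.write(chunk)
    return target
```

A call such as `download("http://155.178.201.160/d-tpp/1712/xml_data/d-TPP_Metafile.xml", dest_dir="./xml/")` would then fetch the metafile; streaming in chunks avoids holding large files in memory.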

Converting to images
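The conversion to raster images could be done with the pdf2image package listed under Prerequisites, which wraps the poppler utilities (these must be installed separately). This is a sketch under assumptions: the helper names, the `./PNG/` output directory, the file naming scheme and the 150 dpi resolution are illustrative choices.

```python
import os


def image_path(pdf_path, page, out_dir="./PNG/"):
    """Build the output file name for one page of a PDF (hypothetical scheme)."""
    stem = os.path.splitext(os.path.basename(pdf_path))[0]
    return os.path.join(out_dir, "{}_p{}.png".format(stem, page))


def pdf_to_images(pdf_path, out_dir="./PNG/", dpi=150):
    """Rasterize every page of a PDF to PNG and return the written paths."""
    from pdf2image import convert_from_path  # third-party; requires poppler

    os.makedirs(out_dir, exist_ok=True)
    written = []
    pages = convert_from_path(pdf_path, dpi=dpi)  # list of PIL images
    for number, page in enumerate(pages, start=1):
        target = image_path(pdf_path, number, out_dir)
        page.save(target, "PNG")
        written.append(target)
    return written
```

Writing one PNG per page keeps multi-page charts separate for the later classification and OCR steps.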

Uploading to the GPU

Classification

OCR

Prerequisites

Install the required packages with pip install --user:

- requests

- pdf2image

- scrapy

Code

See also

- https://github.com/euske/pdfminer

- https://dzone.com/articles/pdf-reading

- https://automatetheboringstuff.com/chapter13/

- https://www.binpress.com/tutorial/manipulating-pdfs-with-python/167

- https://github.com/pmaupin/pdfrw