Howto:Processing d-tpp using Python

| This article is a stub. You can help the wiki by expanding it. |

If processing actual PDFs to retrieve such navigational data procedurally is ever going to work, I think it would have to be done using OpenCV running in a background thread (actually a bunch of threads in a separate process) combined with machine learning: feed it a set of manually annotated PDFs, segment each PDF into sub-areas (horizontal/vertical profile, frequencies, identifiers, etc.) and run neural networks on those segments.

Such a thing would need to be very modular to be feasible: process the rasterized image in parallel on the GPU, split the chart into known components, and retrieve the identifiers, frequencies, bearings, etc. that way (which requires an OCR stage, too).

It is an interesting problem, and it would address a number of legal issues, too, just as downloading such data from the web works for a reason. However, I believe it would definitely be a rather complex piece of software, and we would want to involve people familiar with machine learning and computer vision (OpenCV). It is a superset of doing OCR on approach charts: the task is not just to recognize a character set, but an actual document structure and "iconography" for airports, navaids, route markers and so on.

Motivation

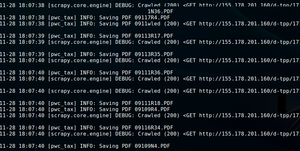

The goal is to come up with the Python machinery to automatically download aviation charts and classify them for further processing/parsing (data extraction): http://155.178.201.160/d-tpp/

We will be downloading two different AIRAC cycles, for example cycles 1712 and 1713 at the time of writing (http://155.178.201.160/d-tpp/1712/ and http://155.178.201.160/d-tpp/1713/).

Each directory contains a set of charts that will be post-processed by converting them to raster images.

Data sources

Chart classification

- STARs (Standard Terminal Arrivals)

- IAPs (Instrument Approach Procedures)

- DPs (Departure Procedures)

Modules

XML Processing

http://155.178.201.160/d-tpp/1712/xml_data/d-TPP_Metafile.xml
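
The metafile can serve as a ready-made index for the chart classification listed above. The following is a minimal sketch, assuming that each chart is described by a `record` element with `chart_code` and `pdf_name` children; these element names, the sample XML and the PDF file names are assumptions for illustration and should be checked against the real d-TPP_Metafile.xml. The helper `charts_by_code` is hypothetical.

```python
import xml.etree.ElementTree as ET
from collections import defaultdict

# Hypothetical excerpt: element names, attributes and file names are
# assumptions and must be verified against the real metafile.
SAMPLE = """
<digital_tpp cycle="1712">
  <state_code ID="CA">
    <city_name ID="SAN FRANCISCO">
      <airport_name ID="SAN FRANCISCO INTL" apt_ident="SFO">
        <record>
          <chart_code>IAP</chart_code>
          <chart_name>ILS OR LOC RWY 28L</chart_name>
          <pdf_name>EXAMPLE1.PDF</pdf_name>
        </record>
        <record>
          <chart_code>STAR</chart_code>
          <chart_name>EXAMPLE ARRIVAL</chart_name>
          <pdf_name>EXAMPLE2.PDF</pdf_name>
        </record>
        <record>
          <chart_code>DP</chart_code>
          <chart_name>EXAMPLE DEPARTURE</chart_name>
          <pdf_name>EXAMPLE3.PDF</pdf_name>
        </record>
      </airport_name>
    </city_name>
  </state_code>
</digital_tpp>
"""


def charts_by_code(xml_text):
    """Group PDF file names by their chart code (e.g. IAP, STAR, DP)."""
    root = ET.fromstring(xml_text.strip())
    groups = defaultdict(list)
    # iter() walks the whole tree, so the nesting depth does not matter.
    for record in root.iter("record"):
        code = record.findtext("chart_code", default="UNKNOWN")
        pdf = record.findtext("pdf_name")
        if pdf:
            groups[code].append(pdf)
    return dict(groups)


if __name__ == "__main__":
    for code, pdfs in sorted(charts_by_code(SAMPLE).items()):
        print(code, pdfs)
```

If the real file follows this layout, the resulting dictionary could be used to sort the downloaded PDFs into one folder per chart type before any image processing takes place.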

Scraping

| Note: This will download roughly 4 GB of data in ~17,000 files for each AIRAC cycle! |

- This should support caching

- And interrupting/resuming scraping

- Alternatively use a media pipeline [1]

    import os

    import scrapy
    from scrapy.crawler import CrawlerProcess
    from scrapy.http import Request

    # Only relevant for the media-pipeline alternative: this has no effect
    # unless it is passed to Scrapy as a setting (together with FILES_STORE).
    ITEM_PIPELINES = {'scrapy.pipelines.files.FilesPipeline': 1}


    def createFolder(directory):
        """Create the directory (and any parents) if it does not exist yet."""
        try:
            os.makedirs(directory, exist_ok=True)
        except OSError:
            print('Error: Creating directory. ' + directory)


    class dTPPSpider(scrapy.Spider):
        name = 'dTPPSpider'

        # https://doc.scrapy.org/en/latest/topics/settings.html
        custom_settings = {
            'HTTPCACHE_ENABLED': True,
            'HTTPCACHE_STORAGE': 'scrapy.extensions.httpcache.FilesystemCacheStorage',
            'HTTPCACHE_POLICY': 'scrapy.extensions.httpcache.RFC2616Policy',
        }

        allowed_domains = ["155.178.201.160"]
        start_urls = ["http://155.178.201.160/d-tpp/1712/",
                      "http://155.178.201.160/d-tpp/1713/"]

        def parse(self, response):
            # Walk the directory listing and request only the PDF links;
            # parent-directory and sub-directory links are skipped.
            for href in response.css('a::attr(href)').extract():
                if href.lower().endswith('.pdf'):
                    yield Request(
                        url=response.urljoin(href),
                        callback=self.save_pdf
                    )

        def save_pdf(self, response):
            directory = './PDF'
            # URL layout: .../d-tpp/<cycle>/<file>.PDF
            path = response.url.split('/')[-1]
            cycle = response.url.split('/')[-2]
            # One sub-folder per AIRAC cycle
            target = os.path.join(directory, cycle)
            createFolder(target)
            self.logger.info('Saving PDF %s (cycle:%s)', path, cycle)
            with open(os.path.join(target, path), 'wb') as f:
                f.write(response.body)


    process = CrawlerProcess({
        'USER_AGENT': 'Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1)'
    })
    process.crawl(dTPPSpider)
    process.start()  # the script will block here until the crawling is finished
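
The interrupting/resuming requirement from the list above can also be approached at the file level, independently of the HTTP cache. The following is a rough sketch using only the standard library, assuming the same `PDF/<cycle>/<file>` layout as the spider; the helper names `target_path`, `pending` and `save` and the file names `A.PDF` and `B.PDF` are hypothetical.

```python
import os
import tempfile


def target_path(url, root="PDF"):
    """Map .../d-tpp/<cycle>/<file> to <root>/<cycle>/<file>."""
    parts = url.rstrip("/").split("/")
    return os.path.join(root, parts[-2], parts[-1])


def pending(urls, root="PDF"):
    """Return the URLs whose target file is still missing or empty."""
    todo = []
    for url in urls:
        path = target_path(url, root)
        if not os.path.isfile(path) or os.path.getsize(path) == 0:
            todo.append(url)
    return todo


def save(url, body, root="PDF"):
    """Write to a temporary name first, then rename.

    An interrupted run therefore never leaves a truncated PDF behind
    under its final name.
    """
    path = target_path(url, root)
    os.makedirs(os.path.dirname(path), exist_ok=True)
    tmp = path + ".part"
    with open(tmp, "wb") as f:
        f.write(body)
    os.replace(tmp, path)
    return path


if __name__ == "__main__":
    root = tempfile.mkdtemp()
    urls = ["http://155.178.201.160/d-tpp/1712/A.PDF",  # hypothetical file names
            "http://155.178.201.160/d-tpp/1712/B.PDF"]
    save(urls[0], b"%PDF-1.4 dummy", root)
    print(pending(urls, root))  # only the second URL is still pending
```

On a restart, only the URLs returned by `pending` would need to be requested again, which matters with ~4 GB per cycle.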

Converting to images

| Note: By default, all PDF files will be 387 x 594 pts (use pdfinfo to see for yourself).[2] |

pip3 install pdf2image

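    import tempfile

    from pdf2image import convert_from_path

    # The rendered pages are written to a temporary directory that is
    # removed again when the with-block ends.
    with tempfile.TemporaryDirectory() as path:
        # Returns a list of PIL images, one per PDF page
        images_from_path = convert_from_path('/folder/example.pdf', output_folder=path)
        # Do something here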
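
The page size from the note above determines how large the raster images become. As a small worked example: a PostScript point is 1/72 inch, so the pixel size follows directly from the chosen resolution. The helper name `raster_size` is hypothetical, and the 200 DPI value is an assumption (it should be the pdf2image default, but check the `dpi` parameter of your installed version).

```python
def raster_size(width_pt, height_pt, dpi):
    """Convert a page size in points (1/72 inch) to pixels at the given DPI."""
    return round(width_pt / 72 * dpi), round(height_pt / 72 * dpi)


if __name__ == "__main__":
    print(raster_size(387, 594, 72))   # (387, 594)
    print(raster_size(387, 594, 200))  # (1075, 1650)
```

At roughly 1075 x 1650 pixels per chart and ~17,000 charts per cycle, the resolution is worth choosing deliberately before converting everything.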

Simplification / Feature Extraction

We can easily simplify our charts by creating thumbnails for each chart and merging all files into a larger image/texture.
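
Merging thumbnails into one larger image mostly comes down to computing where each tile goes. The following sketch only covers that layout step, assuming a fixed tile size and a row-major grid; the function name `atlas_layout` and the 97 x 149 tile size (about a quarter of 387 x 594) are arbitrary choices for illustration.

```python
import math


def atlas_layout(count, tile_w, tile_h, columns):
    """Return the atlas size and the top-left offset of every tile.

    Tiles are placed row by row, left to right.
    """
    rows = math.ceil(count / columns)
    offsets = [((i % columns) * tile_w, (i // columns) * tile_h)
               for i in range(count)]
    return (columns * tile_w, rows * tile_h), offsets


if __name__ == "__main__":
    size, offsets = atlas_layout(5, 97, 149, columns=3)
    print(size)     # (291, 298)
    print(offsets)  # [(0, 0), (97, 0), (194, 0), (0, 149), (97, 149)]
```

With Pillow, each page image could then be shrunk in place with `Image.thumbnail((tile_w, tile_h))` and copied into a blank atlas image with `Image.paste(thumb, offset)`.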

Image Randomization

Since we only have very little data, we need to come up with artificial data for training purposes. We can do so by randomizing our existing image set to create all sorts of "charts", for example by transforming/re-scaling our images or changing their aspect ratio:
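
One possible way to drive such a randomization is to draw the target sizes first and apply them to the images afterwards. This is a minimal sketch under that assumption; the function name `random_variants` and the scale and stretch ranges are made up for illustration and would need tuning.

```python
import random


def random_variants(width, height, n, seed=None,
                    scale_range=(0.5, 1.5), stretch_range=(0.8, 1.25)):
    """Return n randomized (width, height) pairs for one source image size.

    scale   re-scales both axes uniformly,
    stretch changes the aspect ratio by widening or narrowing the image.
    """
    rng = random.Random(seed)  # a seed makes the training set reproducible
    sizes = []
    for _ in range(n):
        scale = rng.uniform(*scale_range)
        stretch = rng.uniform(*stretch_range)
        new_w = max(1, round(width * scale * stretch))
        new_h = max(1, round(height * scale))
        sizes.append((new_w, new_h))
    return sizes


if __name__ == "__main__":
    for size in random_variants(1075, 1650, n=3, seed=1):
        print(size)
```

Each resulting size could be passed to Pillow's `Image.resize((new_w, new_h))` to produce the actual training image; rotations or added noise would be further candidates for the same approach.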

Uploading to the GPU

Classification

OCR

We don't just need to do character recognition, but also have to deal with aviation-specific symbology/iconography. Once again, we can refer to PDF files for the specific symbols.[3]

Prerequisites

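Install the following packages with pip install --user:

- scrapy

- requests

- pdf2image (also needs the poppler utilities, which include pdfinfo, installed on the system)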

References

External links

Python resources

See also

- https://github.com/euske/pdfminer

- https://dzone.com/articles/pdf-reading

- https://automatetheboringstuff.com/chapter13/

- https://www.binpress.com/tutorial/manipulating-pdfs-with-python/167

- https://github.com/pmaupin/pdfrw