Project Rembrandt

Why this name?

Rembrandt was a Dutch painter who lived in the 17th century, famous as one of the masters of chiaroscuro.

This project is about changing the way FlightGear renders lights, shadows and shades, and aims to make Rembrandt's painting style possible in FG.

What is it?

The idea driving the project is to implement deferred rendering inside FlightGear. From the beginning, FlightGear has had a forward renderer that tries to render all properties of an object in one pass (shading, lighting, fog, ...). This makes it difficult to render more sophisticated shading (see the 'Uber-shader'), because one has to take into account all aspects of the rendering equation at once.

In contrast, deferred rendering separates the operations into simplified stages and collects the intermediate results in hidden buffers that can be used by the next stage.
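As a minimal sketch of this split, the Python fragment below lets a first stage write per-pixel attributes into a buffer and a second stage shade from that buffer alone. The names (`GBufferPixel`, `geometry_stage`, `lighting_stage`) and the tiny one-dimensional "screen" are illustrative assumptions, not FlightGear code.

```python
from dataclasses import dataclass

@dataclass
class GBufferPixel:
    """Per-pixel attributes kept by the first stage (hypothetical layout)."""
    depth: float = 1.0                 # 1.0 means "nothing drawn yet" (far plane)
    normal: tuple = (0.0, 0.0, 1.0)
    diffuse: tuple = (0.0, 0.0, 0.0)

def geometry_stage(fragments, width):
    """Store the attributes of the nearest fragment for each pixel."""
    gbuffer = [GBufferPixel() for _ in range(width)]
    for x, depth, normal, diffuse in fragments:
        if depth < gbuffer[x].depth:   # ordinary depth test
            gbuffer[x] = GBufferPixel(depth, normal, diffuse)
    return gbuffer

def lighting_stage(gbuffer, light_dir, light_color):
    """Shade every pixel from the buffer only; the scene geometry is not needed."""
    image = []
    for px in gbuffer:
        n_dot_l = max(0.0, sum(n * l for n, l in zip(px.normal, light_dir)))
        image.append(tuple(d * c * n_dot_l for d, c in zip(px.diffuse, light_color)))
    return image

# Two fragments cover pixel 0; the nearer one (depth 0.3) is kept.
fragments = [
    (0, 0.7, (0.0, 0.0, 1.0), (1.0, 0.0, 0.0)),
    (0, 0.3, (0.0, 0.0, 1.0), (0.0, 1.0, 0.0)),
    (1, 0.5, (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)),
]
gbuffer = geometry_stage(fragments, width=3)
image = lighting_stage(gbuffer, light_dir=(0.0, 0.0, 1.0), light_color=(1.0, 1.0, 1.0))
```

The point of the design is that `lighting_stage` never sees the fragments: its cost depends on the number of pixels, not on the complexity of the scene.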

- The first stage is the Geometry Stage

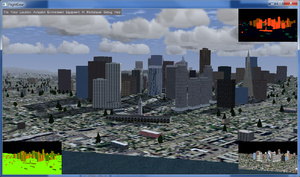

- We render the whole scene into four textures in a single pass, using multiple render targets: one for the depth buffer, one for the normals (lower left of the image), one for the diffuse colors (lower right) and one for the specular colors (upper right).

- The next stage is the Shadow Stage

- We render the scene again into a depth texture, this time from the point of view of the light. There will be one such texture for every light casting shadows.

- Then comes the Lighting Stage, with several substages

- Sky and cloud pass: the sky and the clouds are first drawn using the classical method.

- Ambient pass: the diffuse buffer is modulated with the ambient color of the scene and is drawn as a screen-aligned textured quad.

- Sunlight pass: a second screen-aligned quad is drawn and a shader reconstructs the view-space position of every pixel, then uses it together with the normal stored in the first stage to compute the pixel's diffuse and specular color. The resulting color is blended with the previous pass. Shadows are computed here by comparing the position of the pixel with the position of the light occluder stored in the shadow map.

- Additional light pass (to be implemented): the scene graph will be traversed once more to display light volumes (cones or frusta for spot lights, spheres for omni-directional lights), and their shaders will add the light contributed by each source only to the pixels that receive it.

- Fog pass: a new screen-aligned quad is drawn and the position of the pixel is computed to evaluate the amount of fog affecting it. The fog color is blended with the result of the previous stage.

- Finally comes the Display Stage, with optional post-processing effects

- The contents of the previous buffers are pushed to the main framebuffer to be displayed, optionally modified to show glow, motion blur, HDR, redout or blackout, anti-aliasing, etc.
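The lighting substages listed above can be sketched for a single pixel as follows. This is a simplified illustration under stated assumptions: Lambert diffuse only (no specular term), linear fog, a constant shadow bias, and additive blending between the ambient and sunlight passes. The function names, constants and numbers are hypothetical and are not taken from the FlightGear shaders.

```python
AMBIENT = (0.2, 0.2, 0.2)
SUN_DIR = (0.0, 0.0, 1.0)      # unit vector from the surface toward the sun
SUN_COLOR = (1.0, 1.0, 1.0)
FOG_COLOR = (0.7, 0.7, 0.8)

def ambient_pass(diffuse, ambient):
    """Modulate the diffuse buffer value with the scene ambient color."""
    return tuple(d * a for d, a in zip(diffuse, ambient))

def in_shadow(pixel_light_depth, occluder_depth, bias=0.005):
    """Compare the pixel's depth seen from the light with the shadow map value."""
    return pixel_light_depth > occluder_depth + bias

def sunlight_pass(previous, diffuse, normal, shadowed):
    """Add the sun's diffuse contribution to the result of the previous pass."""
    if shadowed:
        return previous
    n_dot_l = max(0.0, sum(n * l for n, l in zip(normal, SUN_DIR)))
    return tuple(p + d * c * n_dot_l for p, d, c in zip(previous, diffuse, SUN_COLOR))

def fog_pass(previous, distance, fog_start=1000.0, fog_end=20000.0):
    """Blend the fog color over the lit pixel (linear fog is an assumption)."""
    f = min(1.0, max(0.0, (distance - fog_start) / (fog_end - fog_start)))
    return tuple(p * (1.0 - f) + c * f for p, c in zip(previous, FOG_COLOR))

def light_pixel(diffuse, normal, distance, pixel_light_depth, occluder_depth):
    """Run the ambient, sunlight and fog passes in order for one pixel."""
    color = ambient_pass(diffuse, AMBIENT)
    shadowed = in_shadow(pixel_light_depth, occluder_depth)
    color = sunlight_pass(color, diffuse, normal, shadowed)
    return fog_pass(color, distance)

grey = (0.5, 0.5, 0.5)
up = (0.0, 0.0, 1.0)
# Same surface, once directly lit and once behind an occluder nearer to the light.
lit = light_pixel(grey, up, 500.0, pixel_light_depth=0.40, occluder_depth=0.40)
shaded = light_pixel(grey, up, 500.0, pixel_light_depth=0.60, occluder_depth=0.40)
# At the far end of the fog range only the fog color remains.
foggy = light_pixel(grey, up, 20000.0, pixel_light_depth=0.40, occluder_depth=0.40)
```

Every function takes only values that would be read from the buffers of the earlier stages, which is what allows each pass to be drawn as a single screen-aligned quad.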

In FG, we end the rendering pipeline by displaying the GUI and the HUD.

All these stages are described more precisely in this tutorial, which is the basis of the current code, with some additions and modifications.

Caveats

Deferred rendering cannot display transparent surfaces, because its buffers hold only one surface per pixel. For the moment, clouds are rendered separately and have to be lit and shaded on their own. Transparent surfaces are alpha-tested and not blended. To be blended, they would have to be drawn in their own bin over the composited image.
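The difference between alpha testing and blending can be illustrated with a small Python sketch. It assumes a buffer that stores a single surface per pixel and a hypothetical threshold of 0.5; neither the names nor the threshold come from the FlightGear code.

```python
ALPHA_THRESHOLD = 0.5  # hypothetical cut-off value

def write_alpha_tested(stored, fragment):
    """Keep or discard a fragment as a whole.

    `stored` is the (color, depth) pair currently in the buffer and
    `fragment` is a (color, alpha, depth) triple.
    """
    color, alpha, depth = fragment
    if alpha < ALPHA_THRESHOLD:
        return stored                  # fragment discarded, nothing is written
    if depth < stored[1]:
        return (color, depth)          # fragment replaces the stored surface
    return stored

def blend_over(background, fragment):
    """Classical 'over' blending on top of an already composited color."""
    color, alpha, _ = fragment
    return tuple(c * alpha + b * (1.0 - alpha) for c, b in zip(color, background))

wall = ((1.0, 0.0, 0.0), 0.8)          # opaque red surface already in the buffer
glass = ((0.0, 0.0, 1.0), 0.25, 0.4)   # blue, mostly transparent, in front of the wall

tested = write_alpha_tested(wall, glass)   # the glass vanishes completely
blended = blend_over(wall[0], glass)       # the glass tints the final wall color
```

With alpha testing the glass either hides the wall or disappears. Blending needs the final color of what lies behind, which is why such surfaces would have to be drawn over the composited image.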

It also doesn't fit well with depth partitioning, because the depth buffer must be kept in order to recover the view-space position, so for the moment z-fighting is quite visible. Depth partitioning with non-overlapping depth ranges might be the solution and should be tried at some point.
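The trade-off can be made concrete with a short Python sketch. It assumes a standard perspective depth mapping and a 24-bit depth buffer; the near and far values are illustrative and are not FlightGear's actual settings.

```python
def window_depth(distance, near, far):
    """Depth in [0, 1] produced by a standard perspective projection."""
    return (1.0 / near - 1.0 / distance) / (1.0 / near - 1.0 / far)

def view_distance(depth, near, far):
    """Invert window_depth(): recover the view-space distance from a stored depth."""
    return 1.0 / (1.0 / near - depth * (1.0 / near - 1.0 / far))

def depth_step(distance, near, far, bits=24):
    """Approximate smallest distance difference the buffer can resolve."""
    slope = (1.0 / distance ** 2) / (1.0 / near - 1.0 / far)  # d(depth)/d(distance)
    return 1.0 / (slope * 2 ** bits)

# The lighting passes rely on this round trip to find where a pixel is.
stored = window_depth(10000.0, near=0.1, far=100000.0)
recovered = view_distance(stored, near=0.1, far=100000.0)

# One range covering the whole scene versus a far partition of its own.
single_range = depth_step(10000.0, near=0.1, far=100000.0)
far_partition = depth_step(10000.0, near=100.0, far=100000.0)
```

Under these assumptions, the single range resolves differences of several tens of units at a distance of 10000, while the far partition is roughly a thousand times finer. Note that `view_distance` must be given the near and far values of the range a pixel was drawn with, which is why partitions would have to be tracked per pixel or kept non-overlapping.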