Compositor

Revision as of 11:46, 3 November 2018

This article describes content/features that may not yet be available in the latest stable version of FlightGear (2020.3). You may need to install some extra components, use the latest development (Git) version, or even rebuild FlightGear from source, possibly from a custom topic branch using special build settings. This feature is scheduled for FlightGear 2019.3. If you'd like to learn more about getting your own ideas into FlightGear, check out Implementing new features for FlightGear.

| Field | Value |
|---|---|
| Started in | 01/2018 |
| Description | Dynamic rendering pipeline configured via the property tree |
| Maintainer(s) | none |
| Contributor(s) | Icecode |
| Status | |
| Topic branch ($FG_SRC) | https://sourceforge.net/u/fgarlin/flightgear-src/ci/new-compositor/tree/ |
| Topic branch (fgdata) | https://sourceforge.net/u/fgarlin/flightgear/ci/new-compositor/tree/ |

A Compositor is a rendering pipeline configured via the property tree. Every configurable element of the pipeline wraps an OpenGL/OSG object and exposes its parameters to the property tree. This way, the rendering pipeline becomes dynamically reconfigurable at runtime.

Related efforts: Howto:Canvas View Camera Element

Background

The idea was first discussed in 03/2012, during the early Rembrandt days, when Zan came up with patches demonstrating how to create an XML-configurable rendering pipeline.

Back then, this work was considered quite promising [1], and plans were discussed to unify it with the ongoing Rembrandt implementation (no longer maintained).

Adopting Zan's approach would have meant that efforts like Rembrandt could have been implemented without requiring modifications in C++ space.

Rembrandt's developer (FredB) suggested extending the format to avoid duplicating stages when there is more than one viewport: a pipeline would be specified as a template, with conditions as in effects, and the current camera layout would refer to that pipeline, which would then be duplicated, resized and positioned for each declared viewport [2].
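FredB's template idea can be illustrated with a minimal C++ sketch: the pipeline is declared once, and a copy of every stage is created per viewport, sized relative to that viewport and placed at its origin. All names here (`StageTemplate`, `Viewport`, `instantiate`) are hypothetical, not part of Rembrandt or the Compositor, and the conditions FredB mentioned are left out.

```cpp
#include <cassert>
#include <cmath>
#include <string>
#include <vector>

// Hypothetical stage declaration: its size is relative to the viewport it ends up in.
struct StageTemplate {
    std::string name;
    double relativeScale;  // 1.0 = full viewport size, 0.5 = half size, ...
};

// Hypothetical viewport declared by the camera layout.
struct Viewport {
    std::string name;
    int x, y, width, height;
};

// One concrete stage, duplicated for a specific viewport.
struct StageInstance {
    std::string name;  // "<viewport>/<stage>"
    int x, y, width, height;
};

// Duplicate, resize and position every stage of the template for every viewport.
std::vector<StageInstance> instantiate(const std::vector<StageTemplate>& pipeline,
                                       const std::vector<Viewport>& viewports) {
    std::vector<StageInstance> out;
    for (const Viewport& vp : viewports) {
        for (const StageTemplate& st : pipeline) {
            out.push_back({vp.name + "/" + st.name, vp.x, vp.y,
                           static_cast<int>(std::lround(vp.width * st.relativeScale)),
                           static_cast<int>(std::lround(vp.height * st.relativeScale))});
        }
    }
    return out;
}
```

With two viewports and a two-stage template this yields four stage instances, so the stages are written once instead of once per viewport.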

Zan's original patches can still be found in his newcameras branches, which allow the user to define the rendering pipeline in preferences.xml.

At that point, it did not support everything Rembrandt's pipeline needed, but it could most likely have been extended easily to do so.

Basically, the original version added support for multiple camera passes, texture targets, texture formats, passing textures from one pass to another, etc., while preserving the standard rendering path if the user wanted it [3].

In 02/2018, Icecode GL re-implemented a more generic version of Zan's idea using Canvas concepts, which means the rendering pipeline is not just XML-configurable but, thanks to property listeners, also dynamically reconfigurable at runtime.
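The difference between "XML-configurable" and "dynamically reconfigurable via listeners" can be sketched in a few lines of C++. `Property` and `Buffer` below are hypothetical stand-ins, not SimGear classes: a buffer element reads its width from a property once, registers a listener, and reallocates itself whenever the property changes at runtime.

```cpp
#include <cassert>
#include <functional>
#include <utility>
#include <vector>

// Hypothetical minimal property: holds an int and notifies listeners on change.
class Property {
public:
    explicit Property(int value) : value_(value) {}
    int get() const { return value_; }
    void set(int value) {
        if (value == value_) return;  // unchanged value: no notification
        value_ = value;
        for (auto& listener : listeners_) listener(value_);
    }
    void addListener(std::function<void(int)> listener) {
        listeners_.push_back(std::move(listener));
    }
private:
    int value_;
    std::vector<std::function<void(int)>> listeners_;
};

// Hypothetical buffer element configured from a property.
// Note: the listener captures `this`, so the property must not be changed after
// the buffer is destroyed; a real implementation would unregister itself.
class Buffer {
public:
    explicit Buffer(Property& width) : width_(width.get()) {
        width.addListener([this](int w) { reallocate(w); });
    }
    int width() const { return width_; }
    int reallocations() const { return reallocations_; }
private:
    void reallocate(int w) {
        width_ = w;  // a real element would recreate its GPU texture here
        ++reallocations_;
    }
    int width_;
    int reallocations_ = 0;
};
```

A purely XML-configured pipeline would stop after the constructor's initial read; the registered listener is what makes later property writes take effect without a restart.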

The corresponding set of patches (topic branches) was put up for review in 08/2018 to discuss the underlying approach, with the hope of getting it merged in 2019.

Status

08/2018

Fernando has been working on and off on multi-pass rendering support for FlightGear since late 2017. It went through several iterations and design changes, but he thinks it's finally on the right track. It's heavily based on the Ogre3D Compositor and inspired by many data-driven rendering pipelines. Its features include:

- Completely independent of other parts of the simulator, i.e. it's part of SimGear and can be used in a standalone fashion if needed, à la Canvas.

- Although independent, its aim is to be fully compatible with the current rendering framework in FG. This includes the Effects system, CameraGroup, Rembrandt and ALS (and obviously the Canvas).

- Its functionality overlaps with Rembrandt's: anything that can be done with Rembrandt can be done with the Compositor, but not vice versa.

- Fully configurable via an XML interface without compromising performance (à la Effects, using PropertyList files).

- Optional at compile time to aid merge request efforts.

- Flexible, expandable and compatible with modern graphics.

- It doesn't increase the hardware requirements; it expands the range of hardware FG can run on. People with integrated GPUs (Intel HD etc.) can run a Compositor with a single pass that renders directly to the screen like before, while people with more powerful cards can run a Compositor that implements, for example, deferred rendering.

Unlike Rembrandt, the Compositor uses scene graph cameras instead of viewer-level cameras.

This allows CameraGroup to manage windows, near/far cameras and other slaves without interfering with what is being rendered (post-processing, shadows, etc.).

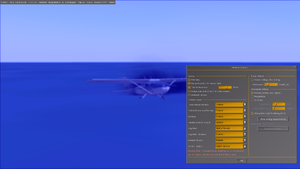

The Compositor is in a usable state right now: it works, but no effects or pipelines have been developed for it yet. There are also some bugs, and some features don't work as expected because of hardcoded assumptions in the FlightGear Viewer code. Still, I think it's time to announce it so that people much more knowledgeable than me can point me in the right direction to get this upstream and warn me about possible issues and concerns. :)

The next major (and hopefully final) iteration of the Compositor is mostly done now; only some cleanup remains. I've tried to keep the changes contained in CameraGroup.cxx/hxx and in new files in SimGear to ease the merging process.

Use Cases

- tail cameras

- mirrors

- procedural texturing

- Shuttle ADI ball

FAQs

I started the Compositor hoping to unify rendering in FG in the least radical way possible. Instead of changing a large part of the codebase like Rembrandt did, the Compositor aims to provide the same functionality and slowly deprecate parts of the FG Viewer code.

Indeed, this should help bring more interesting effects to the table. I'm especially looking forward to porting ALS to a multi-pass environment through PBR.

Shadows

Yes, it includes shadows. The Compositor stacks several passes of different types on top of each other and exchanges buffers between them. Nothing prevents someone from creating a new pass type that implements a shadow map pre-pass. I've already done this successfully myself; the problem is telling the pass which light should be used, which brings me to spotlights.
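A shadow map pre-pass of the kind mentioned above follows the standard shadow-mapping technique: a first pass stores, per texel, the depth of the nearest occluder as seen from the light, and a later pass compares each fragment's light-space depth against that value. The CPU-side C++ sketch below shows only that logic; it assumes points are already transformed into the light's normalized [0,1] space, and the `ShadowMap` class and the bias value are illustrative choices, not Compositor code.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <limits>
#include <vector>

// A point already projected into the light's normalized space:
// u, v in [0,1] address the shadow map; depth in [0,1] is distance from the light.
struct LightSpacePoint {
    double u, v, depth;
};

class ShadowMap {
public:
    ShadowMap(int width, int height)
        : width_(width), height_(height),
          depth_(static_cast<std::size_t>(width) * height,
                 std::numeric_limits<double>::infinity()) {}

    // Pre-pass: keep the nearest occluder depth per texel (like a depth test).
    void renderOccluder(const LightSpacePoint& p) {
        double& stored = depth_[index(p)];
        stored = std::min(stored, p.depth);
    }

    // Main pass: a fragment is shadowed if something closer to the light was
    // recorded in its texel. The bias avoids self-shadowing ("shadow acne").
    bool inShadow(const LightSpacePoint& p, double bias = 0.005) const {
        return p.depth - bias > depth_[index(p)];
    }

private:
    std::size_t index(const LightSpacePoint& p) const {
        int x = std::clamp(static_cast<int>(p.u * width_), 0, width_ - 1);
        int y = std::clamp(static_cast<int>(p.v * height_), 0, height_ - 1);
        return static_cast<std::size_t>(y) * width_ + x;
    }
    int width_, height_;
    std::vector<double> depth_;
};
```

Note that the pre-pass needs exactly the input the text identifies as missing: which light defines the light-space projection.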

Light Sources

Allowing aircraft and scenery developers to include "real" lights is a separate aspect of the scene graph that shouldn't be managed by the Compositor. Some kind of system that exposes OpenGL lights should be implemented and linked to the Compositor. Some decisions have to be made, though: in a forward renderer, lights aren't as cheap as in a deferred renderer, so if an aircraft developer places a light somewhere, someone using a forward renderer might see a performance drop or not see the light at all.
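The "not see them at all" case can be made concrete. Fixed-function OpenGL only guarantees eight simultaneous lights (`GL_MAX_LIGHTS` is at least 8), and forward renderers commonly cap the number of lights evaluated per object, so lights beyond that budget are dropped. The C++ sketch below shows one hypothetical policy, keeping the lights closest to an object; it illustrates the trade-off and is not how FlightGear selects lights.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <string>
#include <vector>

struct Light {
    std::string name;
    double x, y, z;
};

// Squared distance is enough for ranking, so no sqrt is needed.
double distanceSq(const Light& l, double x, double y, double z) {
    double dx = l.x - x, dy = l.y - y, dz = l.z - z;
    return dx * dx + dy * dy + dz * dz;
}

// Hypothetical forward-renderer policy: keep only the `budget` lights closest
// to the object at (x, y, z); every other light is ignored for that object.
std::vector<Light> selectLights(std::vector<Light> lights, std::size_t budget,
                                double x, double y, double z) {
    std::sort(lights.begin(), lights.end(), [&](const Light& a, const Light& b) {
        return distanceSq(a, x, y, z) < distanceSq(b, x, y, z);
    });
    if (lights.size() > budget) lights.resize(budget);
    return lights;
}
```

A deferred renderer avoids this per-object cap because lighting is applied after the geometry pass, per affected pixel, which is why the same aircraft light can be cheap in one pipeline and missing in another.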

Show me the code

I've pushed everything I have to my FG forks:

https://sourceforge.net/u/fgarlin/simgear/ci/new-compositor/tree/

https://sourceforge.net/u/fgarlin/flightgear-src/ci/new-compositor/tree/

https://sourceforge.net/u/fgarlin/flightgear/ci/new-compositor/tree/

I haven't had the time to update my repositories with the latest changes from the official ones, but that shouldn't matter for now.

Merging

I'd love to have this merged as soon as possible, but next month may be a bit too rushed. I don't even know yet whether my approach will be the definitive one, or what small implications the Compositor might have on the rest of the viewer-related code. I'm not in a rush, though; I can wait for the next release if I can't prepare a decent merge request by June/July.

Elements

Buffers

A buffer represents a texture or, more generally, a region of GPU memory. Textures can be of any type supported by OpenGL: 1D, 2D, rectangle, 2D array, 3D or cube map.

A typical property tree structure describing a buffer may be as follows:

```xml
<buffer>
  <name>buffer-name</name>
  <type>2d</type>
  <width>512</width>
  <height>512</height>
  <scale-factor>1.0</scale-factor>
  <internal-format>rgba8</internal-format>
  <source-format>rgba</source-format>
  <source-type>ubyte</source-type>
</buffer>
```
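The three format fields read like the `internalformat`, `format` and `type` arguments of OpenGL's `glTexImage2D`. The C++ sketch below is written under that assumption, and under the further assumption that `scale-factor` multiplies the declared width and height; `BufferDesc`, `toGlEnum`, `scaled` and `estimateBytes` are hypothetical names. The enum values are the standard ones from the GL headers, renamed with a `k` prefix to avoid clashing with GL macros.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <map>
#include <stdexcept>
#include <string>

// Values of the corresponding OpenGL enums (as defined in the GL headers).
constexpr std::uint32_t kGlRgb = 0x1907, kGlRgba = 0x1908;
constexpr std::uint32_t kGlRgb8 = 0x8051, kGlRgba8 = 0x8058;
constexpr std::uint32_t kGlUnsignedByte = 0x1401, kGlFloat = 0x1406;

// Hypothetical in-memory form of the <buffer> node above (2D case only).
struct BufferDesc {
    int width = 0;
    int height = 0;
    double scaleFactor = 1.0;
    std::string internalFormat;  // <internal-format>, e.g. "rgba8"
    std::string sourceFormat;    // <source-format>,   e.g. "rgba"
    std::string sourceType;      // <source-type>,     e.g. "ubyte"
};

// Assumed mapping from the XML strings to GL enums; unknown names are rejected.
std::uint32_t toGlEnum(const std::string& name) {
    static const std::map<std::string, std::uint32_t> table{
        {"rgb", kGlRgb},     {"rgba", kGlRgba},           {"rgb8", kGlRgb8},
        {"rgba8", kGlRgba8}, {"ubyte", kGlUnsignedByte}, {"float", kGlFloat}};
    auto it = table.find(name);
    if (it == table.end()) throw std::invalid_argument("unknown format: " + name);
    return it->second;
}

// Assumed semantics: scale-factor multiplies the declared width and height.
int scaled(int size, double scaleFactor) {
    assert(scaleFactor > 0.0);
    return static_cast<int>(std::lround(size * scaleFactor));
}

// Approximate GPU memory for one 2D buffer (drivers may pad rgb8 to 4 bytes).
std::size_t estimateBytes(const BufferDesc& b) {
    std::size_t bytesPerTexel = 0;
    if (b.internalFormat == "rgba8") bytesPerTexel = 4;
    else if (b.internalFormat == "rgb8") bytesPerTexel = 3;
    else throw std::invalid_argument("unsupported internal format: " + b.internalFormat);
    return static_cast<std::size_t>(scaled(b.width, b.scaleFactor)) *
           static_cast<std::size_t>(scaled(b.height, b.scaleFactor)) * bytesPerTexel;
}
```

For the example buffer (512x512, rgba8) this gives 1,048,576 bytes; with a scale factor of 0.5 it drops to 262,144 bytes, which is why scaled-down buffers are attractive for effects such as bloom.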

Passes

A pass wraps an osg::Camera. As of February 2018, two types of passes are supported:

- scene. Renders a scene. The scene can be an already loaded scene graph node (terrain, aircraft etc.) or a path to a custom 3D model.

- quad. Renders a fullscreen quad with an optional effect applied. Useful for screen space shaders (like SSAO, Screen Space Reflections or bloom) and deferred rendering.

Passes can render to a buffer (render to texture), to several buffers (multiple render targets) or directly to the OSG context. This allows multiple passes to be chained, sharing buffers between them. Passes can also receive other buffers as input, so effects can access them as normal textures.
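Chaining passes through shared buffers implies an ordering constraint: a pass must run after the passes that write its input buffers. The C++ sketch below derives such an order with Kahn's topological sort. Whether the actual Compositor computes an order like this or simply runs passes in declaration order is not stated here; `Pass` and `executionOrder` are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <map>
#include <queue>
#include <set>
#include <stdexcept>
#include <string>
#include <vector>

// Hypothetical pass description: which buffers it samples and which it writes.
struct Pass {
    std::string name;
    std::vector<std::string> inputs;
    std::vector<std::string> outputs;
};

// Order passes so every pass runs after the passes writing its inputs
// (Kahn's topological sort). Inputs no pass writes are treated as external.
// If two passes write the same buffer, the later declaration wins here.
std::vector<std::string> executionOrder(const std::vector<Pass>& passes) {
    std::map<std::string, std::size_t> writer;  // buffer name -> writing pass
    for (std::size_t i = 0; i < passes.size(); ++i)
        for (const auto& out : passes[i].outputs) writer[out] = i;

    std::vector<std::set<std::size_t>> next(passes.size());  // writer -> readers
    std::vector<int> pending(passes.size(), 0);               // unmet dependencies
    for (std::size_t i = 0; i < passes.size(); ++i)
        for (const auto& in : passes[i].inputs) {
            auto it = writer.find(in);
            if (it != writer.end() && it->second != i && next[it->second].insert(i).second)
                ++pending[i];
        }

    std::queue<std::size_t> ready;
    for (std::size_t i = 0; i < passes.size(); ++i)
        if (pending[i] == 0) ready.push(i);

    std::vector<std::string> order;
    while (!ready.empty()) {
        std::size_t i = ready.front();
        ready.pop();
        order.push_back(passes[i].name);
        for (std::size_t j : next[i])
            if (--pending[j] == 0) ready.push(j);
    }
    if (order.size() != passes.size()) throw std::runtime_error("cyclic buffer dependencies");
    return order;
}
```

Given a display pass reading "lit", a lighting pass reading two G-buffer textures and writing "lit", and a geometry pass writing the G-buffer, the order comes out as geometry, lighting, display regardless of declaration order.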

Example XML for a quad type pass:

```xml
<pass-quad>
  <name>pass-name</name>
  <effect>Effects/test</effect>
  <input-buffer>
    <buffer>some-buffer</buffer>
    <unit>0</unit>
  </input-buffer>
  <output-buffer>
    <buffer>color-buffer</buffer>
    <component>color</component>
  </output-buffer>
  <output-buffer>
    <buffer>depth-buffer</buffer>
    <component>depth</component>
  </output-buffer>
</pass-quad>
```
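Conceptually, a quad pass runs its effect's fragment shader once per screen pixel, reading its input buffers (here `some-buffer`, bound to texture unit 0) and writing to its outputs. The C++ sketch below mimics that on the CPU with a bloom-style bright-pass filter. The `Image` type, the 0.8 threshold and the Rec. 709 luminance weights are choices made for this example, not something the Compositor or `Effects/test` prescribes.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <vector>

struct Rgb { float r, g, b; };

// Hypothetical stand-in for a 2D color buffer.
struct Image {
    int width, height;
    std::vector<Rgb> pixels;  // row-major, width * height entries
};

// CPU analogue of drawing a full-screen quad: the "shader" is invoked once
// per output pixel with the input texel at the same position.
Image runQuadPass(const Image& input, const std::function<Rgb(const Rgb&)>& shader) {
    Image output{input.width, input.height, {}};
    output.pixels.reserve(input.pixels.size());
    for (const Rgb& texel : input.pixels) output.pixels.push_back(shader(texel));
    return output;
}

// Example "shader": keep only pixels brighter than a threshold (bloom bright-pass).
Rgb brightPass(const Rgb& c) {
    const float luminance = 0.2126f * c.r + 0.7152f * c.g + 0.0722f * c.b;  // Rec. 709
    return luminance > 0.8f ? c : Rgb{0.0f, 0.0f, 0.0f};
}
```

On the GPU the same per-pixel function would live in the effect's shader, and the output `Image` corresponds to `color-buffer`.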

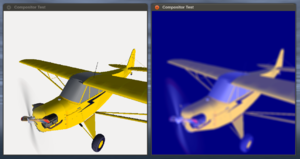

Canvas integration

Apart from serving as a debugging tool for visualizing the contents of a buffer, integrating the Compositor with Canvas gives aircraft developers access to render-to-texture (RTT) capabilities. Compositor buffers can be accessed within Canvas via a new custom Canvas Image protocol, buffer://. For example, the path buffer://test-compositor/test-buffer displays the buffer test-buffer declared in test-compositor.
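How a `buffer://` path names a buffer can be shown with a small C++ parsing sketch: the part after the scheme is split at the first `/` into the compositor name and the buffer name. The function name and the validation rules are assumptions for illustration; the source only shows the `buffer://<compositor>/<buffer>` form.

```cpp
#include <cassert>
#include <optional>
#include <string>
#include <string_view>

struct BufferRef {
    std::string compositor;
    std::string buffer;
};

// Hypothetical parser for "buffer://<compositor>/<buffer>".
// Returns std::nullopt for other schemes or when either name is empty.
std::optional<BufferRef> parseBufferUrl(std::string_view url) {
    constexpr std::string_view scheme = "buffer://";
    if (url.substr(0, scheme.size()) != scheme) return std::nullopt;
    url.remove_prefix(scheme.size());
    const auto slash = url.find('/');  // first slash separates the two names
    if (slash == std::string_view::npos || slash == 0 || slash + 1 == url.size())
        return std::nullopt;
    return BufferRef{std::string(url.substr(0, slash)), std::string(url.substr(slash + 1))};
}
```

Paths with any other scheme, such as ordinary texture files, are rejected and would be left to Canvas's normal image loading.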

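```nasal
# Open a dialog window that displays a Compositor buffer through Canvas.
var (width, height) = (612, 612);
var title = 'Compositor&Canvas test';

var window = canvas.Window.new([width, height], "dialog")
                          .set('title', title);

var myCanvas = window.createCanvas().set("background", canvas.style.getColor("bg_color"));
var root = myCanvas.createGroup();

# buffer://<compositor>/<buffer>: test-buffer declared in test-compositor.
var path = "buffer://test-compositor/test-buffer";

# Image element placed at (50, 50) and drawn at 512x512 pixels.
var child = root.createChild("image")
                .setTranslation(50, 50)
                .setSize(512, 512)
                .setFile(path);
```

Known Issues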

- Canvas (sc::Image) doesn't update its texture unless .update() is invoked in Nasal. Is this a feature?

- The scene graph and the viewer are still managed by FGRenderer. Maybe hijacking the init sequence is a good idea?

- Light sources?

Related

References
